If your team sends every request to GPT-5 or Claude Opus 4.7, you are almost certainly overpaying. Not by a little. By roughly 40 percent on a typical production workload, and sometimes considerably more.

That is not a marketing line. Enterprise LLM spend jumped from $3.5 billion in late 2024 to $8.4 billion by mid-2025, and most teams running those bills have no control layer between their application and the model providers. Every request gets the frontier treatment, whether it needs it or not. Every outage takes production down. Every duplicate question burns a fresh set of tokens.

An AI gateway fixes this. Not magically: the actual savings range from 30 to 50 percent depending on workload. But it does so reliably enough that it is now standard infrastructure for any serious production deployment. This piece explains what an AI gateway actually does, where the savings come from, and how to figure out where your own workload will land in that range.

What an AI gateway actually is

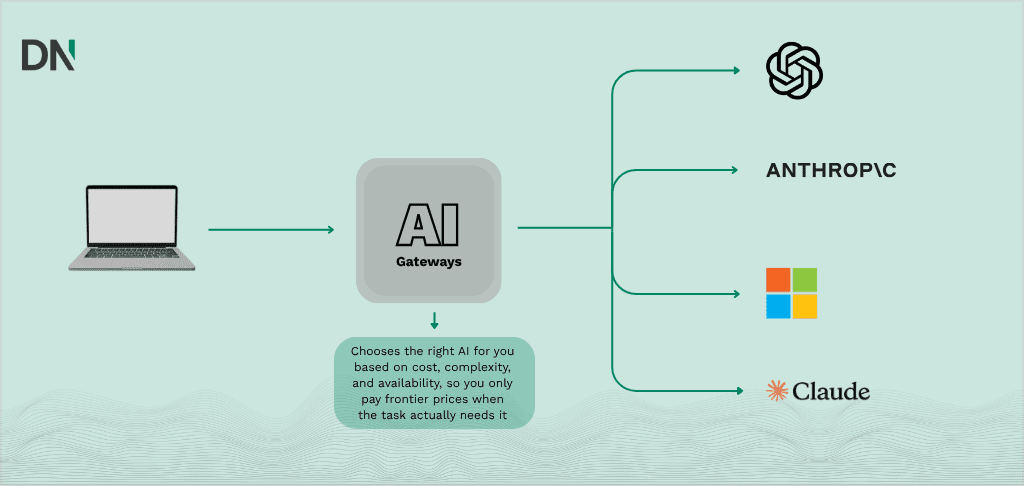

An AI gateway is a single control layer that sits between your application code and your LLM providers. Instead of your app calling OpenAI directly, it calls the gateway, which then decides where the request should go.

That sounds like a trivial piece of plumbing. It is not. Because the gateway sees every request, it can do things no individual application can:

- Route each request to the cheapest model that can handle it.
- Cache answers to questions that have already been asked.
- Fall back to a second provider when the first one is down.
- Enforce per-team and per-project budgets.
- Log and measure what is actually being spent, by whom, on what.

None of this is new infrastructure thinking. Anyone who has run an API gateway like Kong or an edge layer like Cloudflare will recognise the pattern. The novelty is that LLM workloads have cost and latency characteristics that make a control layer much more valuable than it is for a normal REST API. A single misrouted Claude Opus call can cost more than ten thousand Redis lookups.

Where the 40% actually comes from

The “cut costs by 40%” claim is real, and it is the compound result of stacking three independent techniques. No single one gets you there. Each one is worth understanding on its own merits, and each one has a published benchmark range that is worth taking seriously rather than trusting the marketing.

1. Model routing by complexity

This is the biggest single lever, and it is the one most teams leave on the table.

Not every request needs a frontier model. A classification task, a short extraction, a formatting job, a simple FAQ answer: all of these can be handled by a smaller and cheaper model with no measurable quality loss. Research from SciForce found that hybrid routing systems, which send basic requests through lighter models and reserve frontier models for complex reasoning, achieve 37 to 46 percent reductions in overall LLM usage while delivering 32 to 38 percent faster responses on simple queries.

The gateway makes this practical. You define the routing rules once, in configuration, and your application code stays identical. Send everything to the gateway, and the gateway decides whether this particular request goes to Haiku, Sonnet, or Opus. Or to GPT-5 Nano versus GPT-5. Or to a local Qwen3 running on a GB10 box in your own datacenter for anything privacy sensitive.

The honest caveat: routing is only as good as your classification logic. A gateway that routes blindly on string length or keyword matches will eventually send a complex reasoning task to a 3B parameter model and produce nonsense. The good implementations use an evaluation pass, a lightweight classifier model, or explicit per-endpoint rules. The point is that the gateway gives you the place to put that logic. Without it, the only routing you have is “whatever my developer typed into the SDK six months ago”.
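As a rough illustration of where that logic lives, here is a minimal sketch that checks explicit per-endpoint rules first and only then consults a classifier. The endpoint names, tier names, and the keyword-based `classify_complexity` function are all assumptions made up for this example; a real deployment would call a lightweight model or an evaluation pass at that point.

```python
from dataclasses import dataclass

# Hypothetical tier names; map them to whatever models your gateway exposes.
SMALL, MID, FRONTIER = "small", "mid", "frontier"

# Explicit per-endpoint rules take priority (endpoint names are assumptions).
ENDPOINT_RULES = {
    "/classify": SMALL,
    "/extract": SMALL,
    "/agent/plan": FRONTIER,
}


@dataclass
class Request:
    endpoint: str
    prompt: str


def classify_complexity(prompt: str) -> float:
    """Stand-in for a lightweight classifier; returns a score in [0, 1].

    This keyword heuristic only keeps the sketch runnable. It is the kind
    of logic that should not be trusted on its own in production.
    """
    markers = ("prove", "step by step", "trade-off", "design", "debug")
    hits = sum(marker in prompt.lower() for marker in markers)
    return min(1.0, hits / 3)


def route(request: Request) -> str:
    # 1. An explicit rule for this endpoint wins if one exists.
    rule = ENDPOINT_RULES.get(request.endpoint)
    if rule is not None:
        return rule
    # 2. Otherwise decide from the classifier score.
    score = classify_complexity(request.prompt)
    if score >= 0.6:
        return FRONTIER
    if score >= 0.3:
        return MID
    return SMALL
```

The ordering is the design choice worth noting: deterministic rules are easy to audit, so the classifier only decides for traffic nobody has made an explicit call about.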

2. Semantic caching

A large portion of production traffic is semantically redundant. A customer support bot answering “How do I reset my password?” processes nearly identical intent whether the user types “password reset help”, “I forgot my login credentials”, or “cannot get into my account”. Each variation triggers a fresh API call under naive infrastructure. Each one burns tokens.

Semantic caching solves this by comparing the meaning of incoming requests against previously cached ones using vector embeddings and cosine similarity. When a match exceeds a configurable threshold (typically 0.90 to 0.95), the cached response is returned instantly. No LLM call. Cache hits come back in under 5 milliseconds instead of 2 to 5 seconds for a full inference.

The numbers here vary wildly by use case, and the marketing is often dishonest about the realistic ceiling. Published production hit rates mostly fall between 20 and 45 percent overall, not the 90-plus you sometimes see quoted. Classification tasks cache well, in the 40 to 60 percent range. Open-ended chat caches poorly, at 10 to 20 percent. RAG use cases tend to land around 20 percent. But even a 20 percent hit rate on a €5,000 monthly bill saves about €1,000 per month (assuming cached requests cost about as much as the average request), and the cache infrastructure itself costs under 5 percent of the savings.

The best implementations run two layers. An exact-match hash check first (sub-millisecond, zero risk of wrong answers), and then a vector similarity search only if the exact check misses. This keeps the fast path fast and only pays the embedding cost when it might actually help.
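A minimal sketch of that two-layer lookup follows. It assumes a toy bag-of-words `embed` function in place of a real embedding model and a linear scan in place of a vector index, so it only catches lexical overlap, not true paraphrases. `TwoLayerCache` and the 0.92 default threshold are illustrative, not any particular gateway's API.

```python
import hashlib
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words vector. Assumption: a real gateway would call an
    embedding model here; this stand-in only keeps the example runnable."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TwoLayerCache:
    def __init__(self, threshold: float = 0.92):
        self.threshold = threshold
        self.exact = {}     # sha256(prompt) -> response
        self.semantic = []  # list of (vector, response)

    @staticmethod
    def _key(prompt: str) -> str:
        return hashlib.sha256(prompt.encode("utf-8")).hexdigest()

    def get(self, prompt: str):
        # Layer 1: exact-match hash check, no embedding cost.
        hit = self.exact.get(self._key(prompt))
        if hit is not None:
            return hit, "exact"
        # Layer 2: similarity search, only reached on an exact miss.
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self.semantic:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        if best_score >= self.threshold:
            return best_response, "semantic"
        return None, "miss"

    def put(self, prompt: str, response: str) -> None:
        self.exact[self._key(prompt)] = response
        self.semantic.append((embed(prompt), response))
```

Returning the layer name alongside the response makes it easy to log exact and semantic hit rates separately, which is the number you need when tuning the threshold.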

One pitfall worth naming: context leakage. If user A asks a question containing private PII and user B asks something semantically similar, a naive cache will serve A’s answer to B. Any production gateway handling sensitive data needs namespace separation per user or tenant. This is not optional.
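One simple way to get that separation, sketched here under the assumption that every request carries a trusted tenant identifier, is to keep an independent store per tenant so a lookup can never cross namespaces. `NamespacedCache` is a hypothetical name for illustration.

```python
class NamespacedCache:
    """One independent store per tenant; lookups never cross namespaces."""

    def __init__(self):
        self._stores = {}  # tenant_id -> {prompt: response}

    def get(self, tenant_id: str, prompt: str):
        # A tenant with no store yet simply misses.
        return self._stores.get(tenant_id, {}).get(prompt)

    def put(self, tenant_id: str, prompt: str, response: str) -> None:
        self._stores.setdefault(tenant_id, {})[prompt] = response
```

The trade-off is a lower hit rate, because identical questions from different tenants no longer share an entry. For data that is genuinely public, a separate shared namespace can win some of that back.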

3. Fallbacks, load balancing, and provider arbitrage

The third savings lever is less obvious but real. When you route through a gateway, you are no longer locked to one provider’s pricing or availability. If OpenAI has a regional outage at 2 AM (which happened to a lot of teams in 2024 and again in 2025), your gateway automatically routes to Anthropic or Bedrock or Vertex, and your users never notice.
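The failover behaviour can be sketched as an ordered chain of providers tried in turn. `ProviderError` and the callables passed in are placeholders invented for this example; a real gateway would also handle timeouts, retries, and health checks.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a provider call on outage, timeout, or rate limiting."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order; return the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and move on to the next provider.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))
```

Returning the provider name with the response matters for observability: you want to know after the fact how much traffic was served by the fallback.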

But fallback is also a cost lever. The same Llama model might be available from five different providers at wildly different prices. A gateway with genuine multi-provider support can route to the cheapest healthy endpoint that meets your quality and latency requirements, and can shift traffic dynamically as prices change. For models hosted by multiple infrastructure providers, price differences of 2x or more are common.
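Selecting the cheapest healthy endpoint reduces to a filter followed by a minimum. In this sketch the `Endpoint` fields and the single latency constraint are assumptions for illustration; real prices and health data would come from the gateway's own monitoring.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Endpoint:
    provider: str
    price_per_mtok: float  # price per million tokens (hypothetical field)
    p95_latency_ms: float
    healthy: bool


def pick_endpoint(
    endpoints: Iterable[Endpoint], max_latency_ms: float
) -> Optional[Endpoint]:
    """Return the cheapest healthy endpoint within the latency budget."""
    candidates = [
        e for e in endpoints
        if e.healthy and e.p95_latency_ms <= max_latency_ms
    ]
    # default=None covers the case where nothing qualifies.
    return min(candidates, key=lambda e: e.price_per_mtok, default=None)
```

Quality requirements are left out here on purpose; in practice they would be another filter applied before the price comparison.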

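Add it up, and do the math honestly. Hybrid routing on a mixed workload cuts usage by roughly 40 percent. Semantic caching on what remains adds another 20 to 25 percent reduction on that remainder (not on the original total). Provider arbitrage on what is left contributes another 10 percent or so. Compounded, the bill that remains is 0.60 × 0.78 × 0.90 ≈ 0.42 of the original, which on paper is roughly 58 percent off rather than 42. That stacked figure assumes all three levers hit their benchmark numbers on the same traffic, which is unlikely, because each benchmark comes from a workload that suits that technique. Treat it as the best case on paper and the 40 percent headline as the conservative number to plan around.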
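The compounding is easy to get backwards, so here is the arithmetic spelled out. The product of the three factors is the share of the bill that remains, and the saving is one minus that product. The 22 percent caching figure is simply a point inside the 20 to 25 percent range quoted above.

```python
def remaining_fraction(*reductions: float) -> float:
    """Multiply out successive reductions, each applied to what is left."""
    remaining = 1.0
    for r in reductions:
        remaining *= 1.0 - r
    return remaining


# Routing 40%, caching 22% of the remainder, arbitrage 10% of what is left.
remaining = remaining_fraction(0.40, 0.22, 0.10)
saving = 1.0 - remaining
print(f"remaining: {remaining:.2%}, saved: {saving:.2%}")
# prints "remaining: 42.12%, saved: 57.88%"
```

Plugging in your own measured reductions, rather than benchmark figures, gives the estimate that actually applies to your workload.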

The 50 percent ceiling sometimes quoted in vendor material is achievable, but only for workloads that cache unusually well (support bots, FAQ, classification-heavy pipelines) or that have particularly strong routing opportunities (a lot of simple requests currently going to frontier models). The 30 percent floor is what you hit on workloads dominated by unique long-form generation, where semantic caching contributes almost nothing and your savings come almost entirely from routing.

What an AI gateway gives you besides savings

The cost story is the easy sell, but the operational story is why gateways are becoming table stakes.

You get observability. Without a gateway, nobody knows exactly who is spending what on which model. With a gateway, you get per-team, per-project, per-developer spend attribution in real time.
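At its simplest, spend attribution is a roll-up over the gateway's request log. The record fields used here (`team`, `model`, `cost_eur`) are assumptions about what such a log might contain, not a real schema.

```python
from collections import defaultdict


def attribute_spend(log_records):
    """Sum cost per (team, model) pair from gateway log records."""
    totals = defaultdict(float)
    for record in log_records:
        totals[(record["team"], record["model"])] += record["cost_eur"]
    return dict(totals)
```

The same roll-up keyed by project or developer gives the other views mentioned above.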

You get budget enforcement. Hard caps per team per month. Soft alerts at 80 percent. The ability to cut off a runaway agent loop before it burns through a quarter’s budget in a weekend.
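A hard cap with a soft alert can be sketched in a few lines. `TeamBudget` is a hypothetical class for illustration, and it assumes the cost of a request is known or estimated before the request is admitted.

```python
from dataclasses import dataclass


@dataclass
class TeamBudget:
    monthly_cap_eur: float
    soft_alert_ratio: float = 0.80  # alert once 80% of the cap is spent
    spent_eur: float = 0.0
    alerted: bool = False

    def charge(self, cost_eur: float) -> str:
        """Admit or block a request and record its cost if admitted."""
        # Hard cap: refuse anything that would push spend over the limit.
        if self.spent_eur + cost_eur > self.monthly_cap_eur:
            return "blocked"
        self.spent_eur += cost_eur
        # Soft alert: fire once, when the threshold is first crossed.
        if (not self.alerted
                and self.spent_eur >= self.soft_alert_ratio * self.monthly_cap_eur):
            self.alerted = True
            return "allowed_with_alert"
        return "allowed"
```

Because the check runs before the call is forwarded, a runaway agent loop starts getting "blocked" as soon as the cap is reached instead of after the invoice arrives.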

You get provider independence. Your application code is no longer married to OpenAI’s SDK or Anthropic’s message format. The gateway exposes one OpenAI-compatible API, and you swap providers behind it without touching application code.
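In practice this means the application only needs to know the gateway's base URL. The sketch below builds, without sending, an OpenAI-style chat completion request using only the standard library. The gateway URL, API key, and model alias are placeholders, not real endpoints.

```python
import json
import urllib.request

# Hypothetical values: substitute your gateway's URL and key.
GATEWAY_BASE_URL = "https://gateway.example.internal/v1"
GATEWAY_API_KEY = "replace-me"


def build_chat_request(model: str, user_message: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{GATEWAY_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {GATEWAY_API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Swapping providers then happens behind the gateway, for example by remapping what the model alias points to, while this calling code stays the same.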

You get a place to enforce governance. Data loss prevention rules, PII redaction, audit logs for compliance (SOC 2, GDPR, AI Act), prompt filtering. All centralised. One place to update a policy instead of twenty.

And you get fallback reliability. The kind of reliability that means you sleep through the next provider outage instead of getting paged.

The current AI gateway landscape

The market has consolidated enough in 2026 that the choice depends less on features and more on where you already operate and what scale you are at.

LiteLLM remains the default open-source option. Python-based, self-hostable, supports 100-plus providers through an OpenAI-compatible API, large developer community. It is the right starting point if you are small, Python-heavy, and want maximum flexibility. The trade-offs: Python runtime adds measurable per-request latency compared to compiled alternatives, and the project had a supply chain security incident in early 2026 that made some enterprise teams nervous about the PyPI distribution model.

Cloudflare AI Gateway is the zero-setup option if you already run on Cloudflare. It lives on the edge network, the free tier covers analytics and basic caching, and there is no infrastructure to manage. The limit is that it does exact-match caching only, not semantic, so the caching savings are lower than what a proper semantic cache delivers.

Vercel AI Gateway is the natural choice for Next.js teams who already deploy on Vercel. Tight integration with the Vercel AI SDK, frontend-friendly. Teams that need advanced cost controls or semantic caching outgrow it quickly.

Kong AI Gateway makes sense if you already run Kong for your regular API gateway. It extends your existing governance and rate limiting policies to LLM traffic through AI-specific plugins. Semantic caching and token-based rate limiting are in the enterprise tier.

Portkey is the product to look at if cost optimisation is your primary driver. Strong semantic caching, good multi-provider routing, production-tested observability.

Bifrost (open source, written in Go by Maxim AI) targets the high-throughput enterprise end. 11 microseconds of gateway overhead per request at 5,000 requests per second on a single instance, dual-layer caching, hierarchical budgets. Worth a look if you are running serious scale or evaluating for enterprise governance.

OpenRouter is closer to a model marketplace than a gateway, with 300-plus models from 60-plus providers under one API. Excellent for prototyping and for teams who want broad model access without running infrastructure. Governance and self-hosting are limited.

There are others (Helicone, Inworld Router, TrueFoundry, ngrok AI Gateway, Hyperion), each with their angle. The meta-point is that there is no universal winner. Pick the one that fits how you already deploy.

How to think about whether you need one

A simple test. If more than one of the following is true, you need a gateway:

- Your monthly LLM spend is above €500 and climbing.
- You run in production and an LLM outage has real business consequences.
- You use more than one model provider, or expect to within twelve months.
- You have more than one team or project sharing LLM infrastructure.
- You need audit logs, PII handling, or compliance reporting.

If none of those apply, you probably do not need a gateway yet. A single SDK call to OpenAI or Anthropic is fine for prototyping. The moment you move to production with real users and real billing, the calculation flips fast. If you are unsure where your workload sits on this list, a short AI Feasibility Assessment is a reasonable way to get a concrete answer before committing to infrastructure.

The pragmatic starting point

For most teams, the sensible path looks like this. Start with a managed gateway (Cloudflare if you are already there, Vercel if you are on Next.js, Portkey or Bifrost Cloud otherwise) to get routing, caching, and observability live in a week. Instrument your traffic. Look at what your actual cache hit rate is, what the distribution of request complexity looks like, and where your spend is concentrated.

Then, and only then, start tuning. Set routing rules for the categories of request that clearly do not need a frontier model. Enable semantic caching for the endpoints where intent is repetitive (support, FAQ, classification). Add budget guardrails per team. Measure the before and after. Teams that want hands-on support walking through this tuning process in their own environment can bring in outside help through AI Consultancy, which is the kind of work that tends to pay for itself within the first billing cycle.

If your savings hit 25 percent in the first month, you are on track. If they hit 40 percent by month three, you have done the work properly. If you land above 50 percent, your workload caches unusually well and you should count yourself lucky. If you are below 20 percent after six months of real effort, your workload is probably in the minority that does not benefit much from caching (lots of unique long-form generation, for example), and your savings will come almost entirely from routing and provider arbitrage instead.

The headline number is a target, not a guarantee. The infrastructure pattern is the point. Once the gateway is in place, every future optimisation gets cheaper to implement, every new provider becomes plug-and-play, and every outage becomes a non-event. That is the real value. The 40 percent is just what you notice first on the invoice.

Frequently asked questions

Is an AI gateway the same thing as an API gateway like Kong or AWS API Gateway?

No. A traditional API gateway handles routing, authentication, and rate limiting for REST endpoints. An AI gateway does all of that, but adds the pieces that matter for LLM traffic specifically: semantic caching, model-aware routing, token-based budgets, multi-provider fallback, and per-prompt observability. Kong has added AI-specific plugins to bridge this gap, but most dedicated AI gateways treat LLM traffic as a first-class workload rather than an add-on.

Will routing requests to smaller models hurt quality?

Only if the routing logic is careless. A gateway that routes blindly on string length will eventually send a complex reasoning task to a 3B parameter model and produce nonsense. A properly configured gateway uses either explicit per-endpoint rules (this endpoint always goes to Haiku, that one always to Opus) or a lightweight classifier to decide. The quality trade-off is manageable, but it is not automatic. Plan to evaluate before and after.

How long does it take to deploy a gateway in production?

For a managed option like Cloudflare AI Gateway, Vercel, or Portkey, you can be live in a day. You change your base URL and API key, and you are routing through the gateway. For a self-hosted option like LiteLLM or Bifrost, plan a week to get the infrastructure, observability, and initial routing rules in place. Getting real savings out of the gateway takes longer, typically one to three months of tuning routing rules and caching thresholds based on actual traffic.

What about data privacy and the EU AI Act?

A gateway is actually helpful here. Because all LLM traffic flows through one control point, you have a single place to enforce PII redaction, audit logging, and data residency rules. For workloads that cannot leave EU infrastructure, pair the gateway with a self-hosted model (for example Qwen or Llama running on local hardware) and route privacy-sensitive traffic there while sending non-sensitive traffic to frontier providers. The gateway makes that split practical.
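The split can be sketched as a routing decision made before any provider is called. The two regular expressions below are deliberately naive stand-ins for a real PII detector, and the backend names are assumptions for illustration.

```python
import re

# Naive detectors for illustration only; real DLP tooling is far broader.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
IBAN_LIKE = re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b")


def choose_backend(prompt: str, tenant_requires_eu: bool) -> str:
    """Keep sensitive or residency-bound traffic on the self-hosted model."""
    if tenant_requires_eu or EMAIL.search(prompt) or IBAN_LIKE.search(prompt):
        return "self-hosted-eu"
    return "frontier-provider"
```

Failing closed is the safer default: when the detector is unsure, sending the request to the self-hosted model costs some quality but never leaks data.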

Do I need a gateway if I only use one provider?

If you are sure you will never use a second provider, the cost-savings case is weaker. You still get semantic caching, budget controls, and observability, which are worth something. But if your main concern is lock-in avoidance and fallback reliability, a gateway only delivers that value when you actually have multi-provider coverage configured.

What does a gateway cost?

Managed gateways typically charge either a percentage of your LLM spend (1 to 5 percent is common) or a flat monthly fee based on request volume. Self-hosted options like LiteLLM and Bifrost are free to license, but you pay in infrastructure and ops time. For most teams, the gateway cost is well under 10 percent of the savings it produces. If it is not, you picked the wrong gateway.