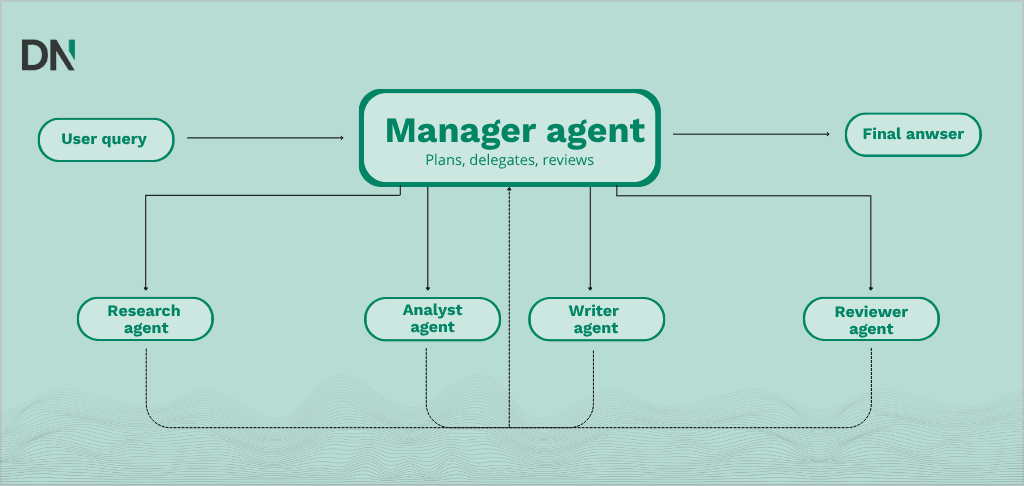

A multi-agent AI system (MAS) is an architecture in which multiple specialized AI agents, each with a defined role and toolset, work together under an orchestrator to complete complex tasks that a single model cannot handle reliably. Instead of one generalist model trying to manage every step of a workflow, a MAS functions like a small digital team: a manager agent breaks down the objective, delegates subtasks to worker agents (such as research, drafting, or compliance), and validates the combined output. This approach improves accuracy, scalability, and auditability, which is why enterprises in 2026 are using multi-agent systems to automate workflows that previously required constant human coordination.

By 2026, this shift toward agentic AI is changing how organizations think about operational efficiency, moving from manual task management to coordinating teams of digital workers.

What are multi-agent AI systems?

A multi-agent AI system is a setup in which several autonomous agents, each with a defined role, specific tools, and clear instructions, work together to solve a problem. Where a generalist chatbot tries to handle every request on its own, a multi-agent system functions more like a small team. An orchestrator or manager agent coordinates the interactions, delegates tasks, and validates the output of specialized worker agents.

Key characteristics include:

- Modularity: each agent is responsible for a specific sub-task, such as data retrieval, code execution, or compliance checks.

- Autonomy: agents make independent decisions within their defined scope and interact with digital tools through APIs.

- Collaboration: agents communicate through structured protocols to share findings, review each other’s work, and converge on an answer.

- Scalability: new capabilities are added by introducing a new specialized agent rather than retraining a central model.

The architecture of AI agent orchestration

Orchestration is the layer that keeps specialized agents working in sync. Without it, agents can produce redundant output, get stuck in loops, or fail to hand off the right context. Four patterns are most common in practice today.

1. Centralized orchestration

A single lead agent acts as the central coordinator. It receives the initial query, breaks it into a plan, and assigns tasks to subordinate agents. This pattern fits workflows that require strict top-down control and predictable outcomes, such as automated financial reporting.
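As a rough illustration of this pattern, the sketch below uses plain functions in place of LLM-backed agents. The names `plan`, `orchestrate`, `WORKERS`, and the two worker functions are hypothetical and chosen only for this example; a real planner would call a model rather than return a fixed list.

```python
from typing import Callable

# Hypothetical worker agents: plain functions standing in for LLM-backed agents.
def fetch_ledger(task: str) -> str:
    return f"ledger data for {task}"

def summarize(task: str) -> str:
    return f"summary of {task}"

WORKERS: dict[str, Callable[[str], str]] = {
    "data": fetch_ledger,
    "summary": summarize,
}

def plan(query: str) -> list[tuple[str, str]]:
    """Break the query into (worker, subtask) pairs. Fixed here; a model would decide in practice."""
    return [("data", query), ("summary", query)]

def orchestrate(query: str) -> dict[str, str]:
    """The lead agent: build the plan, delegate each subtask, collect the results."""
    results: dict[str, str] = {}
    for worker_name, subtask in plan(query):
        results[worker_name] = WORKERS[worker_name](subtask)
    return results

report = orchestrate("Q3 revenue")
```

All delegation goes through `orchestrate`, which is what gives this pattern its predictability: workers never call each other directly.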

2. Sequential orchestration

Often called a chain or pipeline. Data flows through a fixed linear path: agent A finishes its task, then hands the result to agent B. This is common in content creation pipelines, where a research agent has to finish before a writing agent starts.
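A minimal sketch of such a chain, assuming each agent can be modelled as a function from text to text. The stage functions `research`, `write`, and `edit` are placeholders invented for this example.

```python
from functools import reduce
from typing import Callable

Stage = Callable[[str], str]

# Placeholder stages; each would wrap a model call in a real pipeline.
def research(topic: str) -> str:
    return f"notes on {topic}"

def write(notes: str) -> str:
    return f"draft based on {notes}"

def edit(draft: str) -> str:
    return draft.capitalize()

def run_pipeline(stages: list[Stage], initial: str) -> str:
    """Feed the output of each stage into the next, in order."""
    return reduce(lambda value, stage: stage(value), stages, initial)

article = run_pipeline([research, write, edit], "agent orchestration")
```

Because the order is fixed, a failure in one stage stops everything after it, which is the main trade-off of this pattern.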

3. Hierarchical and probabilistic orchestration

A multi-layered version of centralized orchestration: higher-level manager agents oversee groups of specialized agents, which combines strategic oversight with task-specific execution. For example, a project manager agent might oversee a development team made up of a coder agent, a reviewer agent, and a tester agent. The difference from centralized orchestration is the number of layers: centralized typically uses one delegation layer, hierarchical uses several. Some 2026 implementations add probabilistic routing, where the system selects the next agent dynamically based on real-time confidence scores rather than a fixed script.
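Confidence-based routing can be sketched in two ways, shown below under the assumption that each candidate agent reports a score between 0 and 1. The function names, the `threshold` default, and the `"manager"` fallback are illustrative choices, not part of any specific framework.

```python
import random

def route_greedy(confidences: dict[str, float],
                 threshold: float = 0.5,
                 fallback: str = "manager") -> str:
    """Pick the highest-confidence agent, or escalate when no agent is confident enough."""
    best = max(confidences, key=confidences.get)
    return best if confidences[best] >= threshold else fallback

def route_sampled(confidences: dict[str, float], rng: random.Random) -> str:
    """Pick an agent with probability proportional to its confidence score."""
    agents = list(confidences)
    weights = [confidences[agent] for agent in agents]
    return rng.choices(agents, weights=weights, k=1)[0]

scores = {"coder": 0.82, "reviewer": 0.10, "tester": 0.08}
chosen = route_greedy(scores)
```

The greedy variant is easier to audit. The sampled variant occasionally tries lower-ranked agents, which can help when confidence scores are poorly calibrated.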

4. Decentralized (peer-to-peer) orchestration

Agents communicate directly without a central manager. They use shared memory or messaging protocols to pick up tasks based on their capabilities. This pattern is resilient and works well in dynamic environments such as real-time fraud detection.
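One way to picture this is a shared task board that peers poll for work they can handle. The sketch below is single-process and uses invented names (`Task`, `Peer`, the check types); a production system would need a real message bus or store with atomic claims.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Task:
    kind: str
    payload: str
    claimed_by: Optional[str] = None

@dataclass
class Peer:
    name: str
    capabilities: set

    def claim(self, board: list) -> list:
        """Claim every unclaimed task on the board that matches this peer's capabilities."""
        mine = [t for t in board if t.claimed_by is None and t.kind in self.capabilities]
        for task in mine:
            task.claimed_by = self.name
        return mine

# Hypothetical fraud checks posted to shared memory; no manager assigns them.
board = [
    Task("velocity_check", "tx-1"),
    Task("geo_check", "tx-1"),
    Task("velocity_check", "tx-2"),
]
peers = [Peer("velocity-agent", {"velocity_check"}), Peer("geo-agent", {"geo_check"})]
for peer in peers:
    peer.claim(board)
```

If one peer goes offline, its task types stay unclaimed on the board until another peer with the same capability joins, which is where the resilience comes from.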

Technical frameworks for agent orchestration

Choosing the right framework matters as much as the orchestration pattern itself. The market in 2026 is broader than it was two years ago, but a few frameworks come up most often in enterprise projects.

| Framework | Core philosophy | Best use case |
|---|---|---|
| LangGraph | State machine (directed graphs) | Production apps that need precise control and error recovery |
| CrewAI | Role-based collaboration | Rapid prototyping and “human-like” team automation |
| AutoGen / AG2 | Conversation-driven | Scenarios with negotiation or multi-agent debate |
| OpenAI Agents SDK | Tool-first agent loops | Teams already on the OpenAI stack |
| Claude Agent SDK | Tool-first with strong context handling | Teams building on Claude, especially for long-running tasks |
| Microsoft Agent Framework 1.0 | Enterprise-grade .NET/Python | The “grown-up” successor to Semantic Kernel; best for Azure/Enterprise environments |

A short note on AutoGen and AG2: AutoGen is the framework originally released by Microsoft Research. AG2 is a community-driven fork that emerged after part of the original team moved on. They share a row in the table above because both follow a conversation-driven design, but they are separate, active projects with overlapping but distinct roadmaps. Treating them as one product is a common mistake worth avoiding.

LangGraph: the stateful orchestrator

Built by the LangChain team, LangGraph treats agent workflows as directed graphs. It supports cycles and persistent state, which is useful for tasks that need revision steps or human-in-the-loop approval. It is one of the more mature options for high-reliability enterprise agents.
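The idea of a graph with cycles and shared state can be shown without any framework. The sketch below is plain Python and deliberately does not use LangGraph's API; the node names, the `next_node` routing function, and the rule that approval happens on the second revision are all assumptions made for the example.

```python
from typing import Callable, Optional

State = dict

def draft(state: State) -> State:
    state["revisions"] += 1
    state["text"] = f"draft v{state['revisions']}"
    return state

def review(state: State) -> State:
    # Stand-in rule: the reviewer accepts the second revision.
    state["approved"] = state["revisions"] >= 2
    return state

NODES: dict[str, Callable[[State], State]] = {"draft": draft, "review": review}

def next_node(current: str, state: State) -> Optional[str]:
    """Edges of the graph. The review -> draft edge is the cycle."""
    if current == "draft":
        return "review"
    if current == "review" and not state["approved"]:
        return "draft"
    return None  # no outgoing edge: the run ends

def run(start: str, state: State) -> State:
    node: Optional[str] = start
    while node is not None:
        state = NODES[node](state)
        state["history"].append(node)  # state persists across nodes
        node = next_node(node, state)
    return state

final = run("draft", {"revisions": 0, "approved": False, "history": []})
```

The `history` list shows the loop in action: the workflow visits `draft` and `review` twice before it terminates.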

CrewAI: the role-playing framework

CrewAI puts the focus on the role and mission of each agent. By describing agents in natural language (“you are a senior security auditor with 15 years of experience”), developers lean on the model’s existing knowledge to shape behavior without much custom code. This makes it accessible for non-technical stakeholders, which is why it shows up often in AI workshops.

Steps to orchestrate a specialized AI team

Deploying a multi-agent system well requires a clear method. Without one, the digital workforce drifts away from the actual business goals.

Step 1: Task decomposition and role definition

Pick a high-value, repetitive workflow. Break it down into smaller steps and assign each one to an agent persona. For a customer support automation project, that might look like:

- Triage agent: categorizes incoming tickets.

- Knowledge retrieval agent: queries internal documentation.

- Drafting agent: writes the response in the brand voice.

- Compliance agent: checks that no sensitive data is included.
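The role list above can be captured as data before any agent is built. This is a minimal sketch: the `AgentRole` class, the tool names, and the `unowned_steps` helper are hypothetical and only illustrate checking that every workflow step has an owner.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentRole:
    name: str
    instructions: str
    tools: tuple = ()  # hypothetical tool identifiers

SUPPORT_TEAM = [
    AgentRole("triage", "Categorize incoming tickets.", ("ticket_api",)),
    AgentRole("knowledge_retrieval", "Query internal documentation.", ("docs_search",)),
    AgentRole("drafting", "Write the response in the brand voice."),
    AgentRole("compliance", "Check that no sensitive data is included.", ("pii_scanner",)),
]

WORKFLOW = ["triage", "knowledge_retrieval", "drafting", "compliance"]

def unowned_steps(workflow: list, team: list) -> list:
    """Return workflow steps that no agent role is responsible for."""
    owners = {role.name for role in team}
    return [step for step in workflow if step not in owners]

gaps = unowned_steps(WORKFLOW, SUPPORT_TEAM)
```

Running this kind of check early makes gaps in the decomposition visible before any prompt is written.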

Step 2: Defining communication protocols

Decide how agents share information. Will they use a shared memory bank, or pass structured JSON between each other? Clear hand-off rules prevent the “broken telephone” effect, where context gets lost between agents.
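If you choose structured JSON, a small schema with validation on the receiving side catches lost context early. The sketch below assumes a hand-off message with five fields; the `Handoff` class and its field names are illustrative, not a standard.

```python
import json
from dataclasses import asdict, dataclass

@dataclass
class Handoff:
    sender: str
    recipient: str
    task_id: str
    summary: str
    context: dict

REQUIRED_FIELDS = {"sender", "recipient", "task_id", "summary", "context"}

def encode(message: Handoff) -> str:
    """Serialize a hand-off so it can be passed between agents."""
    return json.dumps(asdict(message))

def decode(raw: str) -> Handoff:
    """Parse a hand-off and reject it if any required field is missing."""
    data = json.loads(raw)
    missing = REQUIRED_FIELDS - data.keys()
    if missing:
        raise ValueError(f"handoff missing fields: {sorted(missing)}")
    return Handoff(**data)

original = Handoff("triage", "drafting", "T-1", "Refund request", {"priority": "high"})
received = decode(encode(original))
```

Rejecting incomplete messages at the boundary means a downstream agent never works from partial context without anyone noticing.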

Step 3: Tool integration and API access

Agents are only as useful as the tools they can reach. Connect them to internal systems such as your CRM, ERP, or Slack through tools or plugins. In 2026, the Model Context Protocol (MCP) has become the de facto standard for this layer. Originally introduced by Anthropic and now adopted by OpenAI, Microsoft, and most major framework vendors, MCP gives agents a common way to discover and call tools across systems, instead of every team building bespoke integrations. Exposing those systems and your knowledge bases through MCP servers means you build each connection once and reuse it across different agents and frameworks.

The choice of which systems to connect, and how to handle authentication and permissions, often becomes the most time-consuming part of the build. It is also where most production issues originate, so it deserves careful design rather than being treated as plumbing.
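The permission side of this can be sketched as a registry that checks grants before every tool call. This is plain Python and not the MCP API; `ToolRegistry`, `crm_lookup`, and the agent names are hypothetical.

```python
from typing import Any, Callable

class ToolRegistry:
    """Maps tool names to callables and tracks which agent may call which tool."""

    def __init__(self) -> None:
        self._tools: dict = {}
        self._grants: dict = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def grant(self, agent: str, tool: str) -> None:
        self._grants.setdefault(agent, set()).add(tool)

    def call(self, agent: str, tool: str, *args: Any) -> Any:
        # Deny by default: an agent can only call tools it was explicitly granted.
        if tool not in self._grants.get(agent, set()):
            raise PermissionError(f"{agent} may not call {tool}")
        return self._tools[tool](*args)

def crm_lookup(customer_id: str) -> dict:
    """Stand-in for a real CRM query."""
    return {"id": customer_id, "tier": "gold"}

registry = ToolRegistry()
registry.register("crm_lookup", crm_lookup)
registry.grant("retrieval_agent", "crm_lookup")
record = registry.call("retrieval_agent", "crm_lookup", "C-42")
```

A deny-by-default check like this keeps a drafting agent, for example, from reaching systems it has no reason to touch.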

Step 4: Implementing guardrails and human-in-the-loop

To prevent autonomous errors, add checkpoints. An agent might be allowed to draft a contract, but a human manager has to approve it before an email agent is allowed to send anything. This is a core element of responsible AI implementation and a topic we go into in more depth during custom AI development projects.
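A checkpoint of this kind can be as simple as a function that refuses to act without approval. In the sketch below, `approve` stands in for whatever review step you use (a ticket, a UI button, a chat prompt) and `send` for the email agent; both are assumptions for the example.

```python
from typing import Callable

def send_with_approval(draft: str,
                       approve: Callable[[str], bool],
                       send: Callable[[str], None]) -> str:
    """Send the draft only if the human reviewer approves it."""
    if approve(draft):
        send(draft)
        return "sent"
    return "held_for_review"

outbox: list = []

# The reviewer rejects the first version and approves the second.
first = send_with_approval("Contract v1", approve=lambda d: False, send=outbox.append)
second = send_with_approval("Contract v2", approve=lambda d: True, send=outbox.append)
```

The important property is that the sending step is unreachable without a positive approval, instead of relying on the agent to ask.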

Business benefits of multi-agent systems

Multi-agent architectures offer real advantages over single-agent setups, although the size of the gains depends heavily on the use case.

- Lower coordination overhead: end-to-end workflows like marketing campaigns or onboarding flows can run with much less manual coordination, although exact savings vary per organization.

- Higher accuracy through self-correction: when one agent reviews the work of another, hallucinations and errors get caught before the final output leaves the system.

- Modular maintenance: when a regulation changes, you update the prompt or tools for the compliance agent. You do not have to redesign the whole system.

- Better auditability: because each step is handled by a defined agent, you get a clearer audit trail than with a single black-box model.

Comparison of single-agent vs. multi-agent systems

| Feature | Single-agent system | Multi-agent system (MAS) |
|---|---|---|
| Complexity handling | Limited; prone to errors in long sequences | High; tasks split into manageable units |
| Error handling | If the agent fails, the task ends | Built-in redundancy and self-correction |
| Cost to run | Lower per request | Higher (multiple LLM calls), but often higher ROI |
| Development time | Fast (single prompt) | Longer (orchestration design needed) |
| Transparency | Low (black-box reasoning) | Higher (audit trail of agent interactions) |

Conclusion: the digital workforce in practice

Multi-agent AI systems are one of the more practical applications of agentic AI today. By moving from generalist models to coordinated teams of specialists, organizations can automate workflows that were previously too complex for a single model to handle reliably. Whether the goal is managing a software development cycle or automating cross-departmental operations, the ability to orchestrate AI agents is becoming a meaningful capability for many organizations.

For organizations that want to see how this works in practice, an AI demo is usually the most concrete starting point.

Frequently asked questions

What is the main advantage of multi-agent systems over single LLMs?

Specialization. A single LLM tends to lose focus when given a complex, multi-step prompt. Multi-agent systems split the task into smaller, focused roles, which usually leads to better accuracy, better tool use, and the ability for agents to check each other’s work.

Are multi-agent systems more expensive to operate?

In raw token usage, yes. A multi-agent workflow involves several LLM calls, at least one for each agent’s reasoning and communication. In 2026, agentic workflows can consume roughly 10x to 15x more tokens than a single prompt, depending on the workflow, which makes cost optimization a real concern at scale. The most common way to manage this is to use Small Language Models (SLMs) for specialized worker agents that handle narrow, well-defined tasks (such as classification, extraction, or routing), and reserve larger frontier models for the orchestrator or for reasoning-heavy steps. This hybrid setup keeps quality high where it matters and cuts costs where it does not. The higher token spend is offset by reduced manual work and better output quality, which often produces a higher overall ROI.
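The effect of the hybrid setup is easy to estimate with simple arithmetic. The prices and token counts below are invented for illustration and do not reflect any vendor's actual pricing.

```python
# Hypothetical prices in dollars per 1,000 tokens; not real vendor pricing.
PRICE_PER_1K = {"frontier": 0.010, "slm": 0.001}

def workflow_cost(calls: list, prices: dict) -> float:
    """Sum the cost of a workflow given (model, tokens) pairs, one per agent call."""
    return sum(tokens / 1000 * prices[model] for model, tokens in calls)

# Five agent calls of 2,000 tokens each.
all_frontier = [("frontier", 2000)] * 5
hybrid = [("frontier", 2000)] + [("slm", 2000)] * 4  # orchestrator stays on the large model

cost_all_frontier = workflow_cost(all_frontier, PRICE_PER_1K)
cost_hybrid = workflow_cost(hybrid, PRICE_PER_1K)
savings = 1 - cost_hybrid / cost_all_frontier
```

Under these assumed numbers, moving the four worker calls to a small model cuts the cost per run by about 72 percent. The real figure depends entirely on your price ratio and on how many tokens each agent uses.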

Can multi-agent systems work with my company’s private data?

Yes. Through custom AI development, agents can be connected to internal databases using Retrieval-Augmented Generation (RAG). RAG retrieves relevant chunks of your internal documents at query time and passes them to the agent, so your data stays under your control and is not used to train the underlying public model.
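The retrieval step can be illustrated with a toy example. The sketch below ranks chunks by word overlap with the query, which is a stand-in for the embedding-based similarity search that production RAG systems typically use; the function names and sample documents are made up.

```python
def tokenize(text: str) -> set:
    return set(text.lower().split())

def retrieve(query: str, chunks: list, k: int = 2) -> list:
    """Return the k chunks sharing the most words with the query (toy similarity measure)."""
    query_words = tokenize(query)
    ranked = sorted(chunks, key=lambda c: len(query_words & tokenize(c)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, chunks: list) -> str:
    """Pass only the retrieved chunks to the agent, not the whole document store."""
    context = "\n".join(f"- {chunk}" for chunk in retrieve(query, chunks))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

DOCS = [
    "Refunds are processed within 14 days",
    "Office opens at 9",
    "Refunds require a receipt",
]
top = retrieve("how are refunds processed", DOCS)
prompt = build_prompt("how are refunds processed", DOCS)
```

Only the selected chunks reach the model at query time, which is why the documents themselves can stay in your own infrastructure.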

How do I prevent agents from getting stuck in loops?

Frameworks like LangGraph let you set max iterations or recursion limits. A manager agent or a human-in-the-loop checkpoint also helps make sure the system stops once a goal is reached, or when it fails to make progress after a set number of attempts.
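Independent of any framework, the same guard can be written as a loop with an iteration cap and a stall check. This is a plain-Python sketch, not LangGraph's API; `run_until_done` and its status strings are hypothetical.

```python
from typing import Any, Callable

def run_until_done(step: Callable[[Any], Any],
                   is_done: Callable[[Any], bool],
                   state: Any,
                   max_iterations: int = 5) -> tuple:
    """Run an agent step repeatedly, stopping on success, on no progress, or at the cap."""
    for _ in range(max_iterations):
        new_state = step(state)
        if is_done(new_state):
            return new_state, "done"
        if new_state == state:
            return new_state, "stalled"  # the step changed nothing: stop instead of looping
        state = new_state
    return state, "limit_reached"

finished = run_until_done(lambda n: n + 1, lambda n: n >= 3, 0)
stalled = run_until_done(lambda n: n, lambda n: n >= 3, 0)
capped = run_until_done(lambda n: n + 1, lambda n: False, 0)
```

Returning a status alongside the state lets a manager agent or a human decide what to do next, instead of treating every stop as a success.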