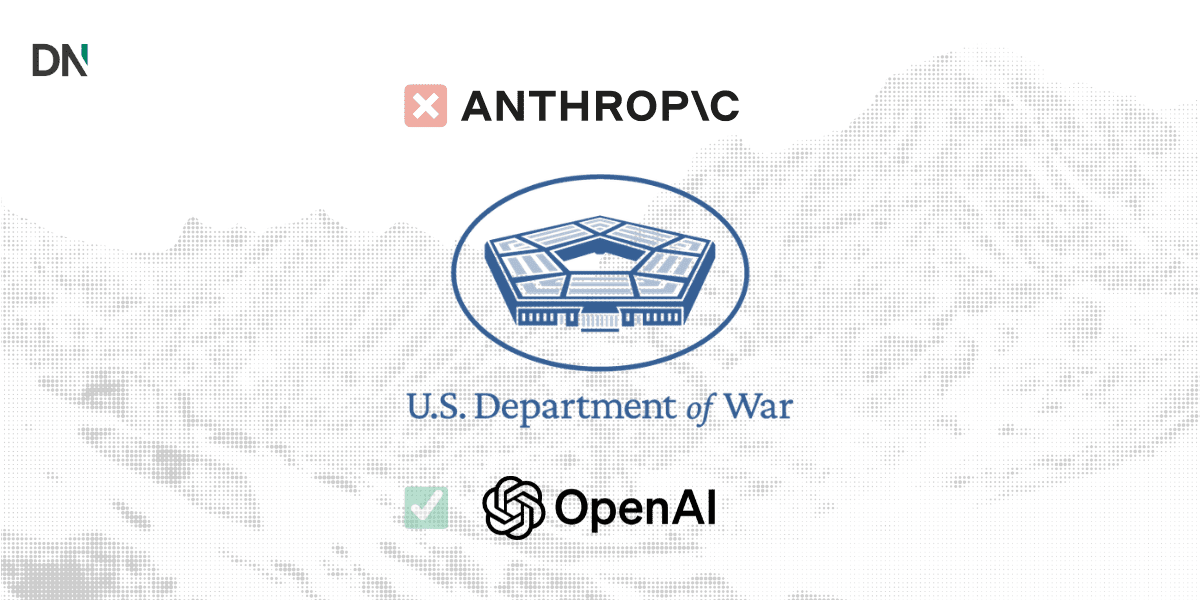

On February 28, 2026, the defense technology landscape underwent a rapid shift as OpenAI reached a landmark agreement to deploy its AI models on United States military classified networks.

The deal was finalized just hours after the Trump administration ordered federal agencies to cease using technology from rival firm Anthropic. This transition marks a pivotal moment for the Department of War (formerly the Department of Defense) as it moves to integrate frontier AI into national security operations while navigating the diverging ethical frameworks of Silicon Valley’s leading labs.

The Anthropic impasse and supply chain fallout

Tensions between the Pentagon and Anthropic reached a breaking point following a public dispute over AI safety guardrails. Anthropic, the developer of the Claude model series, had insisted on contractual prohibitions against using its technology for mass domestic surveillance and fully autonomous weapons systems.

On February 27, Defense Secretary Pete Hegseth officially designated Anthropic a “supply-chain risk to national security.” Hegseth argued that the company’s refusal to allow “all lawful use” of its models was incompatible with American defense principles.

Key developments in the Anthropic-Pentagon split:

- Contract termination: The Pentagon moved to cancel its $200 million agreement with Anthropic.

- Supply chain blacklist: The “risk” designation effectively prohibits defense contractors from conducting commercial activity with Anthropic.

- Transition period: Federal agencies have been granted a six-month grace period to phase out current Claude deployments.

- Legal challenge: Anthropic CEO Dario Amodei has signaled the company will challenge the designation in court, calling the move “legally unsound.”

OpenAI steps in with “layered” safety stack

Following the breakdown of the Anthropic negotiations, OpenAI CEO Sam Altman announced that his company had struck an agreement to provide AI capabilities to the military’s classified environments. While the move has drawn criticism from some industry observers regarding its timing, Altman framed the deal as a way to “de-escalate” tensions between the government and the AI sector.

OpenAI’s agreement includes what it calls a “multi-layered safety stack.” According to company statements, OpenAI will deploy its models via a cloud-only architecture, allowing the firm to retain technical control and ensure models are not integrated directly into “edge” devices, such as autonomous drones.

OpenAI’s negotiated “red lines”:

- No mass domestic surveillance: Explicit prohibitions on using the technology for unconstrained monitoring of U.S. persons.

- No autonomous lethality: Human responsibility is required for the use of force; models cannot independently direct weapons systems.

- No high-stakes automated decisions: Prohibitions on “social credit” style scoring or automated judicial decisions.

- Forward-deployed engineers: Cleared OpenAI personnel will assist in overseeing model performance and safety within the Department of War.

Strategic implications for the defense AI ecosystem

The Pentagon’s stance against Anthropic sends a clear signal to other frontier labs. By using the Defense Production Act and supply-chain risk designations, the administration has indicated it will prioritize operational flexibility and the “all lawful use” standard in its procurement process.

For the military, the switch to OpenAI provides access to the latest generative capabilities. However, the move has sparked intense debate within Silicon Valley. Hundreds of employees at both OpenAI and Google reportedly signed letters in support of Anthropic’s original stance, highlighting a growing rift between corporate leadership and technical staff over the ethics of military AI.

Industry experts note that this development will likely accelerate the migration of classified workflows to OpenAI’s GPT series, while other providers like xAI and Google continue to navigate their own negotiations with the Department of War.

For more information on this subject, see the official statement by Anthropic.