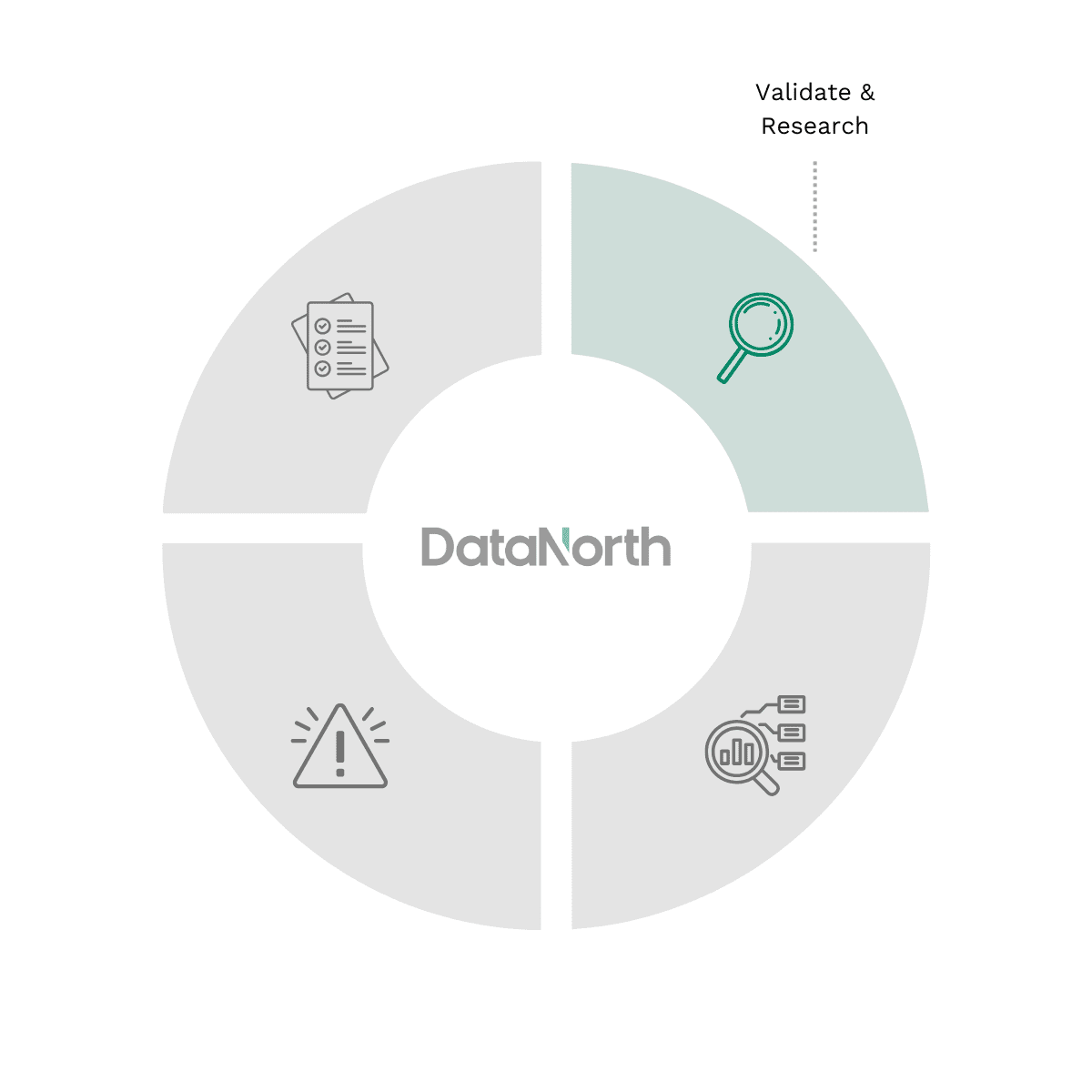

How the Vendor Risk Assessment Works

At DataNorth, our Vendor Risk Assessment ensures your AI adoption is secure and compliant through a four-step methodology: we validate your specific security requirements and data sensitivity, identify the vendor’s technical architecture and sub-processor dependencies, perform a rigorous risk audit of their data lineage and model transparency, and provide a final consultation with a risk-adjusted roadmap.

Let’s unlock your organization’s potential to become AI-first!

Validate & Research

- Interviews: Engaging with technical leads and business owners to define specific functional requirements.

- Capability auditing: Assessing your internal team’s expertise and your current data infrastructure.

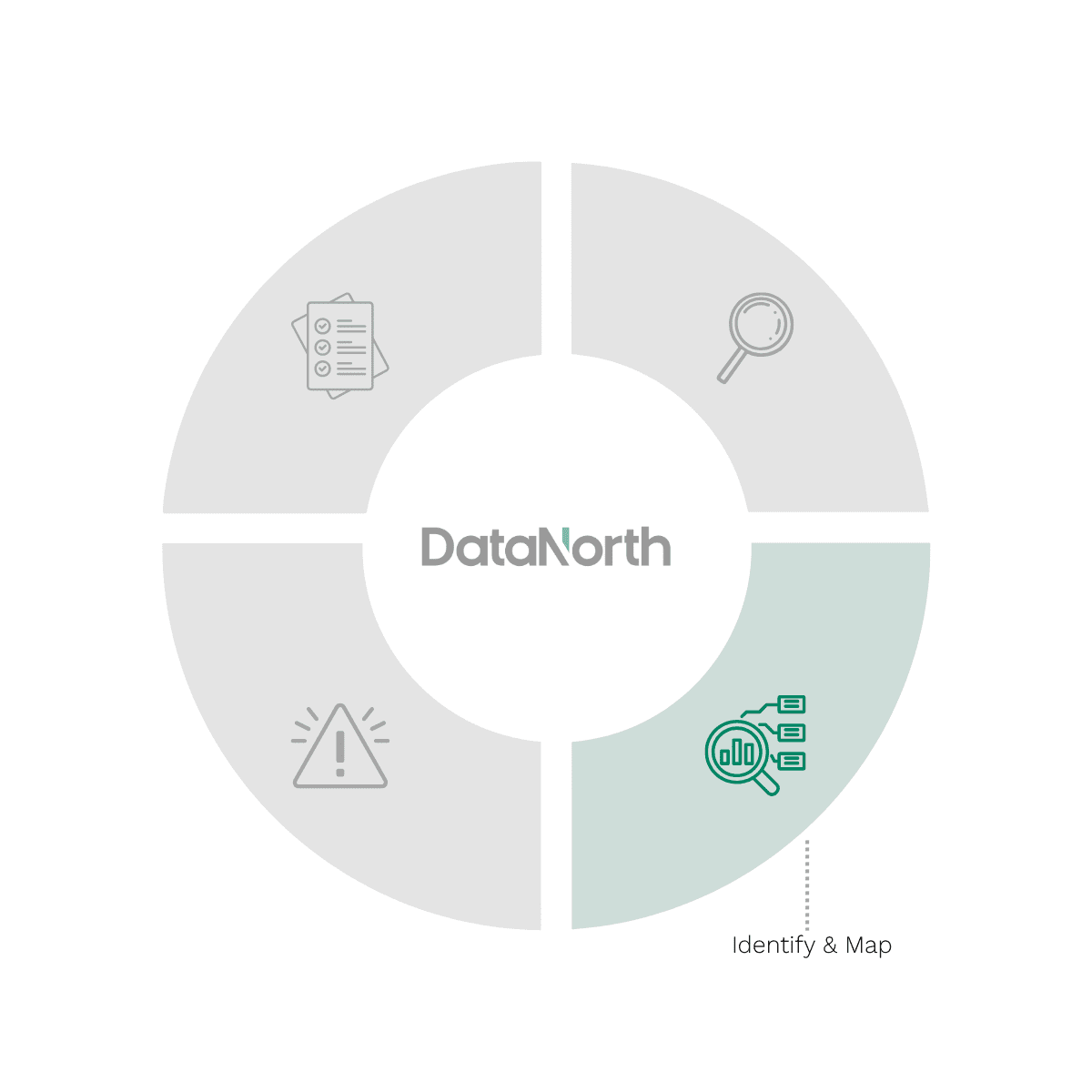

Identify & Map

- Architectural mapping: Deconstructing the vendor’s tech stack to identify “Shadow AI” and hidden third-party sub-processors.

- Requirement matching: Evaluating how a vendor’s model performance aligns with your specific operational and scalability goals.

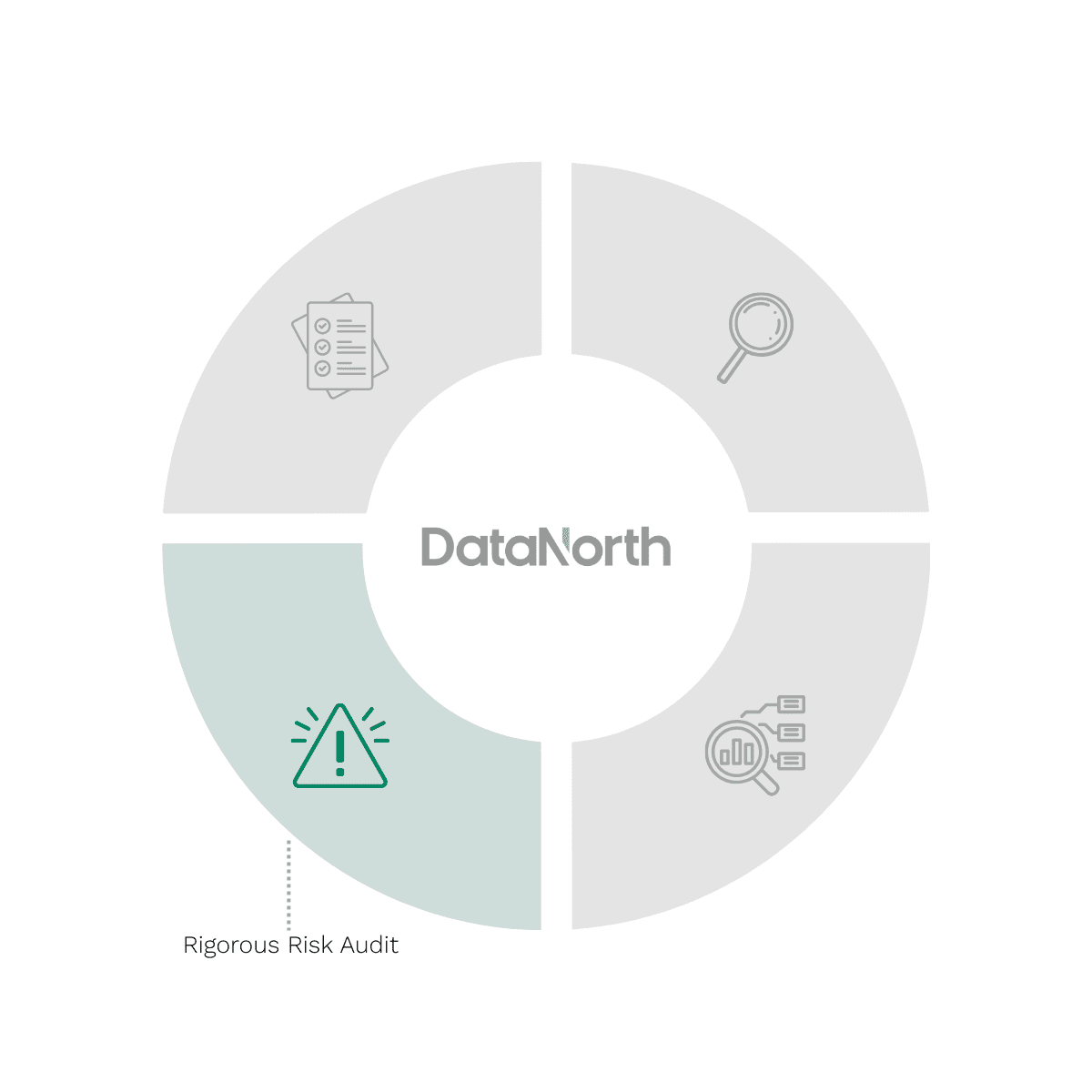

Rigorous Risk Audit

- Data lineage review: Investigating the source of training data to assess whether it contains PII or copyrighted material.

- Model stress testing: Auditing the vendor’s “Red Teaming” results for bias, hallucinations, and resistance to prompt injection.

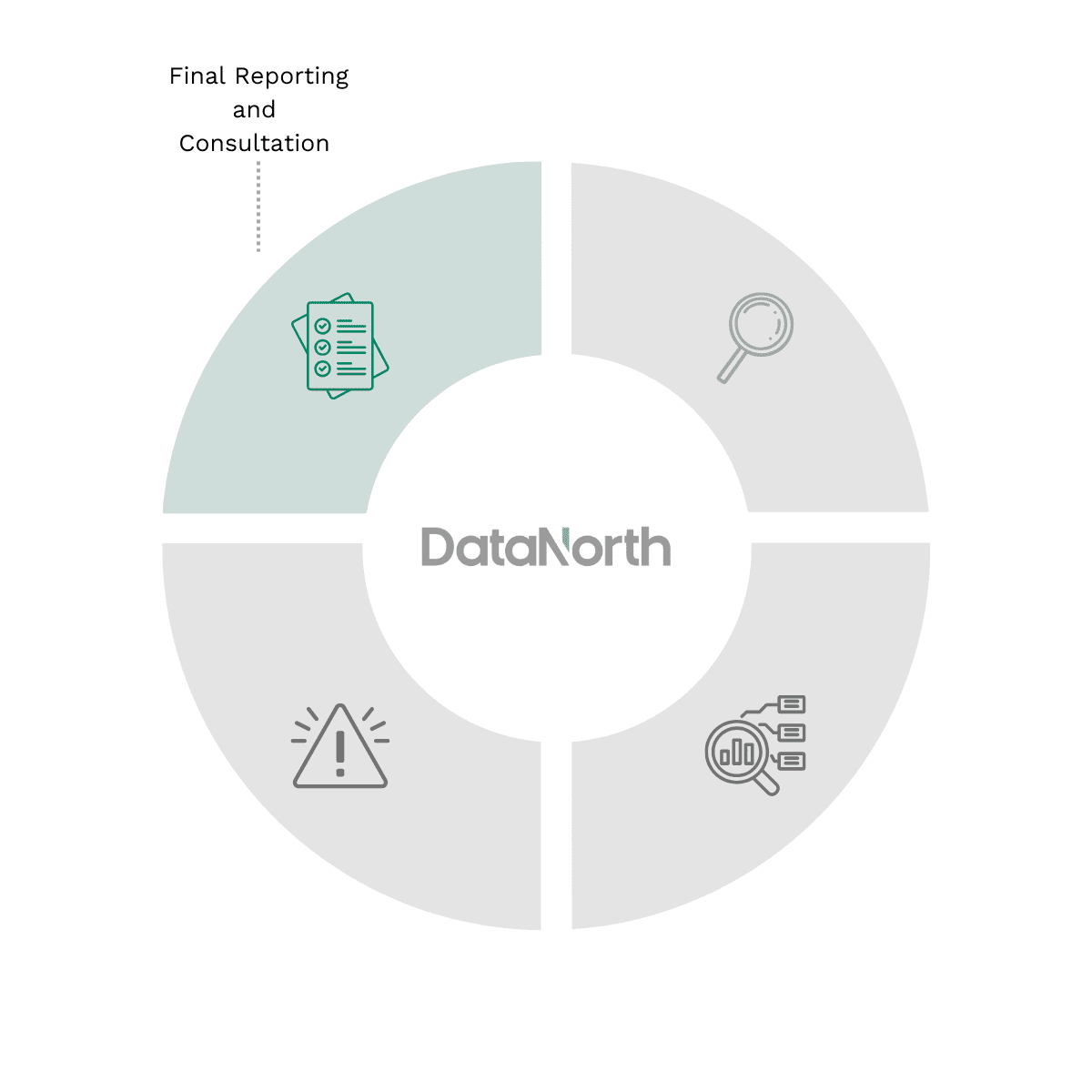

Final Reporting & Consultation

- Risk-adjusted roadmap: Providing a final breakdown of vendor viability vs. long-term compliance liabilities.

- Implementation guidance: Delivering clear, actionable insights to ensure your chosen AI architecture supports sustainable growth.

"The assessment sparked creative ideas, resulting in 55 actionable AI use cases."

Gielis Dijk

IT Manager @ Omrin

Why Choose DataNorth?

9+ Years of AI Experience

Highly Educated AI Experts

We give 100% Honest Advice

Get Your Vendor Risk Assessment

20-Hour Consultancy Package

Our AI experts are available for 20 hours to address your questions

Experts available in Dutch, English, and German

Both on-location and digital options available

The DataNorth Vendor Risk Assessment

Within 2 weeks, receive an extensive report on your vendor risks

Receive a risk and compliance breakdown of your AI vendor ecosystem.

Gain insight into the data sovereignty, model transparency, and long-term security liabilities of your AI vendor landscape.

AI Vendor Risk Training & Workshop

AI vendor risk training and workshop for 10 employees, tailored to your organization

Both on-location and digital options available