Synthetic data is information that is artificially generated by computer algorithms rather than collected from real-world events or individuals. Gartner forecasts that by 2026, 75% of businesses will use generative AI to produce synthetic customer data, up from less than 5% in 2023. The technology works by training generative models on original datasets to learn their statistical patterns, correlations, and structural properties, and then creating entirely new data points that retain the utility of the original without containing personally identifiable information (PII).

The primary driver for the adoption of synthetic data is the growing friction between the need for large datasets to train AI models and the increasing stringency of global privacy regulations like the GDPR and the EU AI Act. Organizations use synthetic data to bypass traditional data masking limitations, accelerate development cycles, and simulate rare “edge case” scenarios that are underrepresented in real-world environments.

When to use synthetic data

The decision to implement synthetic data over real-world data depends on the specific requirements of the project, including privacy constraints, data availability, and the need for controlled experimentation.

Privacy and regulatory compliance

Synthetic data is utilized when strict privacy regulations prevent the use of production data for testing or model training. Unlike anonymization or pseudonymization, which can often be reversed through linkage attacks, high-quality synthetic data has no one-to-one mapping to real individuals. This makes it a primary tool for sectors such as healthcare and finance, where data sharing is heavily restricted. If your organization requires a privacy assessment to evaluate these risks, synthetic data often emerges as a recommended mitigation strategy.

Data scarcity and edge case simulation

In many machine learning applications, real-world data is imbalanced. For example, in fraud detection, legitimate transactions vastly outnumber fraudulent ones. Synthetic data generation allows engineers to create millions of samples for these rare events, improving the model’s ability to recognize patterns it would otherwise rarely see. This is also critical for autonomous systems, such as self-driving vehicles, where simulating dangerous or rare road conditions is safer and more cost-effective than real-world testing.
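
As a rough illustration of how rare-event samples can be multiplied, the sketch below interpolates between existing minority-class records, in the style of the classical SMOTE technique. It is a simple stand-in for the generative models discussed later, not a description of any particular platform. The function name `interpolate_minority`, the `(amount, hour_of_day)` columns, and the sample values are hypothetical.

```python
import random

def interpolate_minority(samples, n_new, k=2, seed=0):
    """Create n_new synthetic points by interpolating between a minority
    sample and one of its k nearest minority neighbours (SMOTE-style)."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(samples)
        # Rank the other minority samples by squared Euclidean distance.
        others = [s for s in samples if s is not base]
        others.sort(key=lambda s: sum((a - b) ** 2 for a, b in zip(base, s)))
        neighbour = rng.choice(others[:k])
        t = rng.random()  # position along the segment base -> neighbour
        synthetic.append(tuple(a + t * (b - a) for a, b in zip(base, neighbour)))
    return synthetic

# Hypothetical fraud records: (amount, hour_of_day)
fraud = [(950.0, 2.0), (1200.0, 3.0), (870.0, 1.0), (1500.0, 4.0)]
new_fraud = interpolate_minority(fraud, n_new=100)
```

Every generated point lies on a line segment between two real fraud records, so the new samples stay inside the range of the observed minority class. In practice the features would be scaled first so that no single column dominates the distance.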

Rapid prototyping and internal sharing

Data access requests within large enterprises can take weeks or months due to security approvals. Synthetic datasets can be shared freely between departments without the same level of administrative overhead. This enables teams to start building applications immediately while waiting for formal access to production systems.

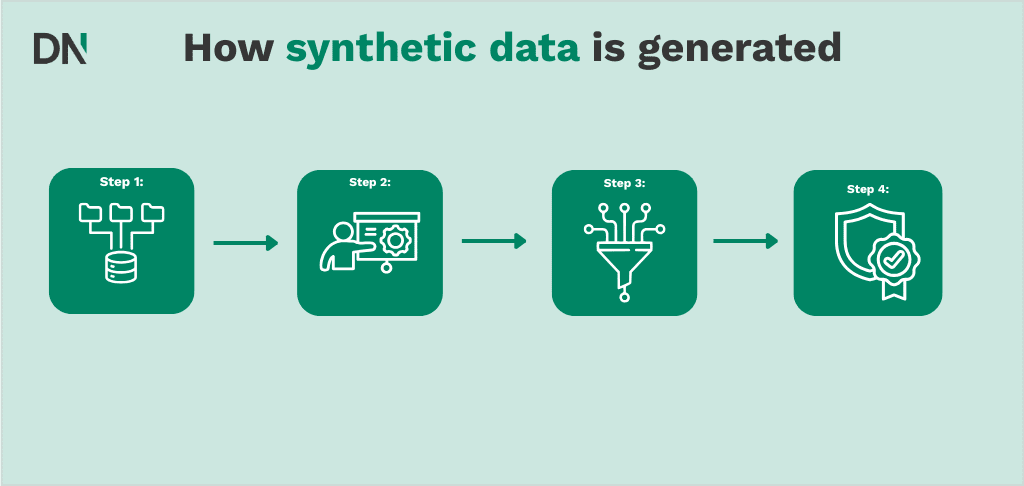

How synthetic data is generated

The technical process of generating synthetic data involves several distinct phases, ranging from initial data analysis to final utility validation.

Step 1: Data ingestion and analysis

The process begins with an input “seed” dataset. The generation algorithm analyzes the metadata, schema, and statistical distributions of this data. This includes identifying the types of variables (categorical, numerical, or time-series) and the complex relationships between them. For instance, in a retail dataset, the algorithm must learn that “purchase date” and “delivery date” have a logical temporal relationship.
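
A minimal sketch of this profiling step, assuming a tiny in-memory retail dataset, might look like the following. The column names, the `profile` and `delivery_lags` helpers, and the sample rows are illustrative assumptions. A production tool would also capture correlations between columns.

```python
from datetime import date
from statistics import mean, stdev

# Hypothetical seed rows; a real seed dataset would be far larger.
seed_rows = [
    {"category": "books", "price": 12.5,
     "purchase_date": date(2025, 1, 3), "delivery_date": date(2025, 1, 6)},
    {"category": "games", "price": 40.0,
     "purchase_date": date(2025, 1, 10), "delivery_date": date(2025, 1, 12)},
    {"category": "books", "price": 7.5,
     "purchase_date": date(2025, 2, 1), "delivery_date": date(2025, 2, 5)},
]

def infer_kind(values):
    """Classify a column as numerical, temporal, or categorical."""
    if all(isinstance(v, (int, float)) and not isinstance(v, bool) for v in values):
        return "numerical"
    if all(isinstance(v, date) for v in values):
        return "temporal"
    return "categorical"

def profile(rows):
    """Summarise each column: its kind plus basic distribution statistics."""
    report = {}
    for col in rows[0].keys():
        values = [r[col] for r in rows]
        kind = infer_kind(values)
        summary = {"kind": kind}
        if kind == "numerical":
            summary["mean"] = mean(values)
            summary["stdev"] = stdev(values)
        elif kind == "categorical":
            summary["levels"] = sorted(set(values))
        else:
            summary["min"], summary["max"] = min(values), max(values)
        report[col] = summary
    return report

def delivery_lags(rows):
    """Days from purchase to delivery; a negative lag would break the temporal rule."""
    return [(r["delivery_date"] - r["purchase_date"]).days for r in rows]

report = profile(seed_rows)
lags = delivery_lags(seed_rows)
```

The lag list is the kind of derived constraint a generator needs to learn: if every observed lag is non-negative, synthetic rows with a delivery before the purchase should be treated as invalid.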

Step 2: Training the generative model

Different mathematical approaches are used depending on the data type:

- Generative Adversarial Networks (GANs): These consist of two neural networks, a generator and a discriminator, that compete against each other to produce increasingly realistic data.

- Variational Autoencoders (VAEs): These compress the input data into a lower-dimensional representation and then reconstruct it, allowing for the generation of new, similar data points.

- Diffusion Models: Frequently used for unstructured data like images, these models learn to reverse a process of adding noise to data.
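
To make the diffusion bullet more concrete, the sketch below shows only the forward (noising) half of the process, which is the part the model later learns to reverse. The linear beta schedule, the step count of 1000, and the sample point are assumptions chosen for illustration. The learned denoising network is omitted entirely.

```python
import math
import random

def alpha_bar_schedule(steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for a linear beta schedule."""
    alpha_bars, running = [], 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Sample x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps, eps ~ N(0, 1)."""
    signal = math.sqrt(alpha_bar)
    noise = math.sqrt(1.0 - alpha_bar)
    return [signal * v + noise * rng.gauss(0.0, 1.0) for v in x0]

alpha_bars = alpha_bar_schedule()
rng = random.Random(0)
x0 = [0.2, -1.3, 0.7]  # a hypothetical normalized data point
x_early = add_noise(x0, alpha_bars[10], rng)   # still close to x0
x_late = add_noise(x0, alpha_bars[-1], rng)    # almost pure noise
```

As the step index grows, the signal coefficient shrinks toward zero and the sample approaches pure Gaussian noise. Generation runs the learned reverse process, starting from noise and denoising step by step.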

Step 3: Synthesis and privacy filtering

Once trained, the model generates new data. A critical sub-step is the application of privacy safeguards: differential privacy, which is usually applied while the model is being trained, and post-generation filters that discard records too similar to the originals. These safeguards reduce the risk that the model has simply “memorized” specific rows of the original data (a form of overfitting), which would result in a privacy breach. If your team is unsure how to configure these parameters, an AI workshop can provide the technical foundation needed to manage these algorithms.
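
One simple post-generation check is to drop any synthetic row that sits too close to a real row. The sketch below is a distance-based heuristic, not differential privacy, and it offers no formal guarantee. The `(age, income)` columns, the sample values, and the `min_distance` threshold are hypothetical.

```python
import math

def nearest_distance(row, reference):
    """Euclidean distance from row to its closest record in reference."""
    return min(math.dist(row, ref) for ref in reference)

def filter_memorized(synthetic, real, min_distance):
    """Keep only synthetic rows farther than min_distance from every real row."""
    return [row for row in synthetic if nearest_distance(row, real) > min_distance]

# Hypothetical (age, income) records. In practice, scale the columns first
# so that one feature does not dominate the distance.
real = [(34.0, 52000.0), (45.0, 61000.0), (29.0, 48000.0)]
synthetic = [(34.0, 52000.0), (38.0, 55500.0), (45.0, 61010.0), (52.0, 70000.0)]

kept = filter_memorized(synthetic, real, min_distance=50.0)
```

Here the exact copy and the near-copy of a real record are removed, while the two rows that are clearly distinct from every real record survive.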

Step 4: Quality assurance and utility testing

The final synthetic output is compared against the original data using statistical metrics. This automated QA process checks for “utility,” ensuring that a machine learning model trained on the synthetic data will perform similarly to one trained on real data.
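
One common statistical metric for a single numerical column is the two-sample Kolmogorov-Smirnov statistic, the largest gap between the empirical cumulative distributions of the real and synthetic values. The sketch below is a plain-Python version for illustration. The sample values are made up, and a real QA suite would also compare correlations and categorical frequencies.

```python
from bisect import bisect_right

def ks_statistic(real, synthetic):
    """Two-sample KS statistic: 0.0 means identical empirical distributions,
    1.0 means the two samples do not overlap at all."""
    real_sorted, synth_sorted = sorted(real), sorted(synthetic)

    def ecdf(sorted_values, x):
        # Fraction of values less than or equal to x.
        return bisect_right(sorted_values, x) / len(sorted_values)

    points = sorted(set(real_sorted) | set(synth_sorted))
    return max(abs(ecdf(real_sorted, x) - ecdf(synth_sorted, x)) for x in points)

real_col = [1.0, 2.0, 3.0, 4.0]
identical = ks_statistic(real_col, [1.0, 2.0, 3.0, 4.0])
shifted = ks_statistic(real_col, [3.0, 4.0, 5.0, 6.0])
disjoint = ks_statistic(real_col, [10.0, 11.0, 12.0, 13.0])
```

A value near zero suggests the synthetic column tracks the real one closely. Any pass/fail threshold is a project-specific choice rather than a fixed rule.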

Comparative analysis of synthetic data platforms

The market for synthetic data platforms in 2026 is categorized by the type of data they specialize in (structured vs. unstructured) and their integration capabilities.

| Platform | Specialization | Key features | Target use case |
|---|---|---|---|
| Gretel.ai | Multi-modal (Text, Tabular, Relational) | APIs for developers, privacy-as-code, and support for complex relational databases. | DevOps, AI training, and data sharing. |
| Mostly.ai | Structured/Tabular Data | High-fidelity reproduction of behavioral and time-series data. | Banking, insurance, and telecommunications. |
| Tonic.ai | Database Synthesis | Subsetting, de-identification, and synthesis across multiple interconnected databases. | Software testing and QA environments. |
| NVIDIA NeMo | Agentic AI & Unstructured Data | Text-based synthetic data for training LLMs and autonomous agents. | Robotics, conversational AI, and research. |
| Synthesis AI | Computer Vision | Generation of photorealistic human images and 3D environments. | Biometrics, security, and virtual reality. |

Choosing the right platform often requires a thorough assessment to determine which tool maintains the highest utility for your specific data distributions.

Limitations and technical considerations

While synthetic data offers significant advantages, it is not a direct replacement for real data in all scenarios.

- Model collapse: If AI models are trained repeatedly on synthetic data without fresh real-world inputs, they can begin to lose the “tail” of the distribution, leading to a reduction in diversity and accuracy.

- Bias amplification: If the seed data contains historical biases (e.g., gender or racial bias in hiring), the synthetic generator will likely reproduce and potentially amplify these biases. Careful auditing is required.

- Complex outliers: Rare but critical outliers in the real world may be filtered out as noise by the generative model, which can be problematic in fields like medical diagnostics where the outlier is the most important data point.
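
The auditing mentioned under bias amplification can start with something as simple as comparing outcome rates per group in the seed data and in the synthetic output. The sketch below assumes a hypothetical hiring dataset with `group` and `hired` fields. The counts are invented to show an amplified gap and do not come from any real system.

```python
def positive_rate_by_group(rows, group_key, outcome_key):
    """Share of positive outcomes within each group."""
    totals, positives = {}, {}
    for row in rows:
        group = row[group_key]
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + int(row[outcome_key])
    return {group: positives[group] / totals[group] for group in totals}

def rate_gap(rates):
    """Difference between the best- and worst-treated group."""
    return max(rates.values()) - min(rates.values())

def make_rows(group, hired_count, total):
    """Build hypothetical records: the first hired_count rows are hires."""
    return [{"group": group, "hired": i < hired_count} for i in range(total)]

seed = make_rows("A", 3, 4) + make_rows("B", 2, 4)       # rates 0.75 vs 0.50
synthetic = make_rows("A", 4, 4) + make_rows("B", 1, 4)  # rates 1.00 vs 0.25

seed_gap = rate_gap(positive_rate_by_group(seed, "group", "hired"))
synthetic_gap = rate_gap(positive_rate_by_group(synthetic, "group", "hired"))
amplified = synthetic_gap > seed_gap
```

A synthetic gap larger than the seed gap is a signal to revisit the generator or rebalance the seed data before the synthetic set is used for training.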

For organizations navigating these complexities, an AI implementation partner can help design hybrid workflows that combine real and synthetic data to mitigate these risks.

Conclusion

Synthetic data has transitioned from a niche privacy tool to a fundamental component of the enterprise AI stack in 2026. By enabling organizations to bypass privacy bottlenecks, simulate rare scenarios, and accelerate development, it provides a scalable solution to the global data shortage. However, the successful use of synthetic data requires a rigorous approach to utility validation and bias management.

Frequently Asked Questions

Is synthetic data considered personal data under the GDPR?

Generally, no. If the synthetic data is generated such that it does not relate to an identified or identifiable natural person and cannot be traced back to the original subjects, it is considered anonymous information and falls outside the scope of the GDPR. However, the process of generating it often involves processing real personal data, which must still comply with legal requirements.

Can synthetic data be used to train Large Language Models (LLMs)?

Yes. Synthetic data is increasingly used to fine-tune LLMs, especially for domain-specific tasks where real conversational data is scarce or sensitive. This is a core component of a custom AI solution for enterprise environments.

How do I measure the quality of synthetic data?

Quality is typically measured through two lenses: Utility and Privacy. Utility is measured by comparing the statistical distributions of the synthetic and real data, or by checking if a model trained on synthetic data achieves similar accuracy on a real-world test set. Privacy is measured through re-identification risk assessments.
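
The second utility check is often called "train on synthetic, test on real". The sketch below illustrates it with a deliberately trivial one-feature threshold classifier. The transaction amounts and labels are hypothetical, and the classifier assumes the positive class has the larger feature values. A real evaluation would use the production model and a held-out real test set.

```python
from statistics import mean

def fit_threshold(rows):
    """Fit a one-feature classifier: threshold halfway between class means."""
    positive_mean = mean(x for x, y in rows if y == 1)
    negative_mean = mean(x for x, y in rows if y == 0)
    return (positive_mean + negative_mean) / 2

def accuracy(threshold, rows):
    """Share of rows where 'x above threshold' matches the label."""
    return sum((x > threshold) == bool(y) for x, y in rows) / len(rows)

# Hypothetical (transaction_amount, is_fraud) pairs.
real_train = [(100.0, 0), (120.0, 0), (150.0, 0), (900.0, 1), (1100.0, 1)]
synthetic_train = [(110.0, 0), (140.0, 0), (160.0, 0), (800.0, 1), (1000.0, 1)]
real_test = [(130.0, 0), (90.0, 0), (700.0, 1), (1200.0, 1), (540.0, 0), (600.0, 1)]

real_score = accuracy(fit_threshold(real_train), real_test)
synthetic_score = accuracy(fit_threshold(synthetic_train), real_test)
utility_gap = real_score - synthetic_score
```

A small gap between the two scores indicates that the synthetic data preserves the signal the model needs. How small is small enough depends on the application.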

What is the difference between synthetic data and data masking?

Data masking (or de-identification) modifies existing records by removing or obscuring specific fields. Synthetic data, however, creates entirely new records from scratch. Synthetic data typically offers higher privacy protection and better preserves the statistical integrity of complex datasets than traditional masking.