In today’s dynamic digital landscape, businesses are constantly looking for innovative technologies to elevate operational efficiency and customer experiences. Among the groundbreaking advancements in Artificial Intelligence, Retrieval-Augmented Generation (RAG) stands out as a true game-changer.

This blog dives deep into Retrieval-Augmented Generation, exploring its mechanisms, its 2026 evolution into Agentic AI, and practical applications for businesses looking for cutting-edge solutions.

So let’s get started!

What is RAG?

Retrieval-Augmented Generation, or RAG for short, is like a super-powered research assistant for your AI systems, enhancing the applicability of large language models by integrating them with your organization’s specific, real-time knowledge bases.

So how does it actually work? Well, RAG combines the strengths of two key components: a retriever and a generator.

The retriever acts as a seeker, pulling relevant information from your database. Retrieval used to rely heavily on simple vector databases, but the focus has since shifted toward “Hybrid Retrieval” (combining keyword and vector search) and “GraphRAG.” This means the retriever doesn’t just look for “similar words”; it can also follow the relationships between your data points (e.g., how a specific client relates to a specific project and a specific contract).

The generator then leverages this retrieved information to craft accurate and contextually aware responses. The process begins with a user query. The retriever, like a skilled librarian, receives the user prompt and searches a large knowledge base for relevant documents. A “Reranker” step follows, in which a second model re-scores the search results so that only the highest-quality passages reach the generator. The generator then combines these passages with what it learned in pre-training to create a response that is both insightful and reliable.
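The retrieve, rerank, and generate steps described above can be sketched in a few lines. This is a minimal illustration, not a production design: the documents, the word-overlap retriever, and the Jaccard-based `rerank` function are all assumptions made for the example (a real reranker would be a trained model), and the sketch stops at building the prompt rather than calling an LLM.

```python
# Toy knowledge base; these documents are invented for illustration.
DOCS = [
    "Refunds are issued within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Return requests must include the original order number.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and strip basic punctuation from each word."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """First pass: keep the k documents sharing the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def rerank(query: str, candidates: list[str]) -> list[str]:
    """Second pass: re-score candidates by Jaccard similarity.

    A production reranker would be a trained model; this is a stand-in.
    """
    q = tokenize(query)

    def jaccard(doc: str) -> float:
        d = tokenize(doc)
        return len(q & d) / len(q | d)

    return sorted(candidates, key=jaccard, reverse=True)


def build_prompt(query: str, passages: list[str]) -> str:
    """Assemble the grounded prompt that would be sent to the generator."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


query = "How long do refunds take after a return request?"
passages = rerank(query, retrieve(query, DOCS))
prompt = build_prompt(query, passages)
print(prompt)
```

The point of the two passes is that the first can be cheap and broad while the second is slower but more careful, so only a handful of passages ever reach the generator.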

This dual approach offers several advantages:

- Minimized error rates: By grounding responses in retrieved facts, RAG significantly reduces the risk of hallucinations. “Self-Correction” loops let the AI check its own answer against the source before the user ever sees it.

- Enhanced contextual understanding: RAG excels at understanding nuances. With the advent of Multimodal RAG, systems can now “read” charts, images, and technical diagrams alongside text to provide a complete answer.

- Up-to-date relevance: By integrating the latest information, RAG keeps responses current without having to retrain a whole AI model, a process that is still too slow and expensive for most business needs.

- Privacy & Access control: Unlike the “black box” models of the past, 2026 RAG systems are built with Role-Based Access Control (RBAC), ensuring the AI only retrieves information the specific user is authorized to see.

Why use RAG?

Retrieval-Augmented Generation is a significant advancement in Natural Language Processing (NLP). By combining the strengths of retrieval-based models and generative models, RAG enhances the overall quality and accuracy of generative AI responses.

Addressing LLM limitations

Traditional LLMs are limited to the knowledge they were trained on, which quickly becomes outdated. RAG addresses this by allowing LLMs to access and integrate up-to-date information from external sources. This is often handled via “Agentic RAG,” where the AI can decide on its own to go search for more info if it realizes its current knowledge isn’t enough to answer a complex question.

Reducing hallucinations

LLMs can sometimes generate “plausible but incorrect” information. RAG mitigates this by grounding responses in factual, retrieved data. This “grounding” is one of the main ways businesses today pursue the very high accuracy that legal or medical applications demand.

Cost-effectiveness

RAG allows for the integration of new information without the need to retrain the entire model. Even as “Long Context” models (models that can read 1 million+ tokens at once) have become common, RAG remains the most cost-effective way to manage massive, multi-terabyte company archives.

Versatility and adaptability

RAG can be applied across various domains, from healthcare to finance. Its ability to provide contextually relevant information makes it a versatile tool for any organization that relies on deep documentation.

Enhancing user trust

By providing verifiable citations and sources for the information used in responses, RAG builds user trust. Users can check the accuracy of the information for themselves. This is particularly critical under the EU AI Act, which mandates transparency in high-stakes AI interactions.

Transforming Businesses: Benefits of RAG

Improving customer service accuracy

With RAG, customer service bots can deliver timely, relevant, and personalized responses. Today’s bots are “Agentic,” meaning they don’t just answer questions; they can retrieve a customer’s specific order history and solve a problem in real time. Research indicates that 75% of customers stay loyal to companies offering excellent support, driving businesses to move beyond simple chatbots.

Streamlining content personalization

For content-driven businesses, RAG is a game-changer. Content personalization, based on real-time user data and behavior, leads to increased engagement. RAG empowers you to create marketing campaigns or product recommendations that feel “human-curated” because they are grounded in the user’s actual history.

Minimizing Errors and Enhancing Decision-Making

By drawing on large datasets, RAG reduces errors in data processing. GraphRAG, which maps out organizational connections, adds decision-making support that paves the way for better business outcomes in complex fields like global logistics or drug discovery.

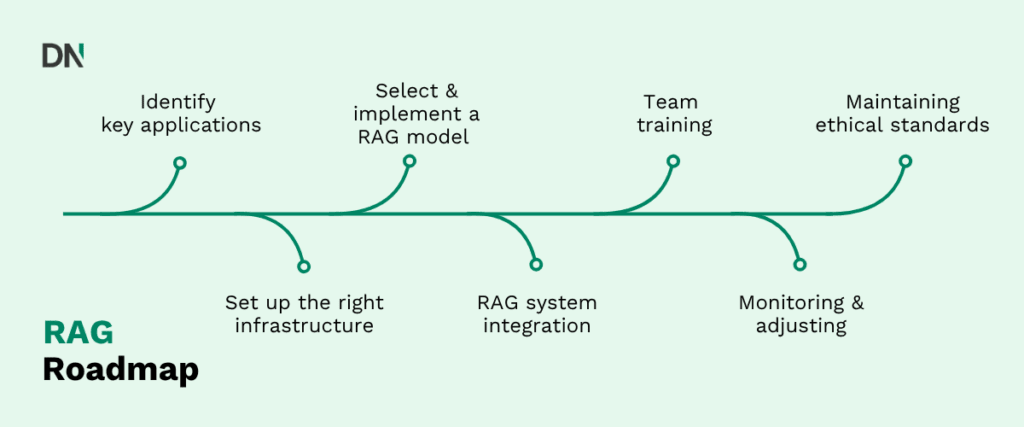

Implementing RAG: A 2026 Practical Roadmap

1. Identify key applications

Assess where RAG can deliver the most value.

- Internal knowledge base: Turning thousands of internal PDFs into a searchable “Company Brain.”

- Regulatory compliance: Using RAG to monitor and cross-reference new laws against company policy.

2. Set up the right infrastructure

While high-performance servers are great, the focus has shifted to serverless vector databases and the Model Context Protocol (MCP). These allow your AI to “talk” to your data where it already lives (SharePoint, Google Drive, SQL) without needing to move it to a new location.

3. Select and implement a RAG strategy

Choose a strategy that aligns with your data:

- Hybrid search: Essential for standard text documents.

- GraphRAG: Ideal for scenarios requiring precise relationship mapping, like legal research or supply chain management.

- Multimodal RAG: Necessary if your data includes images, blueprints, or video.
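To make the hybrid search option more concrete: one common way to merge a keyword ranking and a vector ranking is Reciprocal Rank Fusion (RRF). The article does not say which fusion method to use, so treat this as one possible sketch. The document IDs are hypothetical, and `k = 60` is a commonly used default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by several retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties are broken alphabetically for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a keyword search and a vector search.
keyword_ranking = ["contract_a", "policy_b", "memo_c"]
vector_ranking = ["policy_b", "faq_d", "contract_a"]

fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)
```

RRF only needs rank positions, not raw scores, which is convenient because keyword and vector scores are not on the same scale.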

4. Integrate RAG into your systems

- API Integration: Seamlessly connect RAG capabilities with your existing CRM or ERP.

- Agentic Workflows: Set up your system so the AI can “think” and perform multiple searches to find the right answer.

5. Train your team

Equip your team with “AI Literacy.” Technical staff need to understand Retrieval Evaluation, while operational staff need to learn how to prompt the AI to get the best results from the retrieved data.

6. Monitor and adjust (RAGOps)

Implement “RAGOps” to track effectiveness. Regularly review metrics like “Faithfulness” (is the AI sticking to the facts?) and “Relevance” (is the retrieved info actually helpful?).
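As a rough illustration of what these two metrics measure, here is a deliberately crude token-overlap proxy. This is an assumption made for the example: real evaluation setups typically use an LLM or a trained model as the judge rather than word overlap, and the function names below are hypothetical.

```python
def _tokens(text: str) -> set[str]:
    """Lowercased words with basic punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split()} - {""}


def faithfulness_proxy(answer: str, context: str) -> float:
    """Share of answer words that also appear in the retrieved context."""
    a = _tokens(answer)
    return len(a & _tokens(context)) / len(a) if a else 0.0


def relevance_proxy(question: str, context: str) -> float:
    """Share of question words that also appear in the retrieved context."""
    q = _tokens(question)
    return len(q & _tokens(context)) / len(q) if q else 0.0


context = "Refunds are issued within 14 days."
grounded = faithfulness_proxy("Refunds are issued within 14 days.", context)
drifting = faithfulness_proxy("Refunds take 30 days.", context)
print(grounded, drifting)
```

A lower faithfulness score for the second answer flags that it contains words, such as the "30", that the source never mentions. Tracking scores like these over time is the kind of signal a RAGOps dashboard would surface.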

7. Maintain Ethical & Legal standards

Ensure your RAG deployment adheres to the latest AI guidelines. In 2026, this includes Data Sovereignty (ensuring your data stays in its home region) and Transparency (disclosing when an answer is AI-generated).

Final thoughts: Embracing the future with RAG

As technology advances, integrating sophisticated tools like RAG becomes increasingly crucial for businesses seeking transformative growth. RAG empowers businesses to stay relevant, become more responsive, and build resilience in an AI-first world.

If you are looking to implement RAG solutions in your business, you may want to check out our RAG Readiness Assessment service, where we align your organisation with best practices to get the best results out of your tools. If you are already looking to develop a specific tool that implements RAG, we can assist with that too; have a look at our AI Development service.

Frequently asked questions (FAQ) on RAG

Is RAG still necessary now that “Long Context” models (1M+ tokens) are common?

Yes. While models like Gemini 3.0 can “read” an entire archive in one go, RAG remains superior for three reasons:

- Accuracy: LLMs still suffer from “Lost in the Middle” bias. RAG ensures the most relevant data is placed exactly where the model’s attention is sharpest.

- Cost: RAG is roughly 1,250x cheaper per query. Processing 1 million tokens every time you ask a simple question is financially unsustainable at scale.

- Speed: RAG delivers sub-second responses, whereas saturating a massive context window can lead to 30–60 second latencies.
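The article does not state how the 1,250x cost figure was derived. One set of assumptions that reproduces it: input cost scales linearly with tokens, the long-context approach sends the full 1 million tokens, and the RAG approach sends about 800 tokens of retrieved passages plus the question. The 800-token figure and the per-token price below are assumptions for illustration only.

```python
def input_cost(tokens: int, price_per_million: float) -> float:
    """Input-token cost of one query, assuming linear per-token pricing."""
    return tokens / 1_000_000 * price_per_million


FULL_CONTEXT_TOKENS = 1_000_000  # from the article: a saturated 1M-token window
RAG_CONTEXT_TOKENS = 800         # assumption: a few retrieved passages plus the question
PRICE_PER_MILLION = 2.00         # hypothetical price in dollars; it cancels out of the ratio

ratio = FULL_CONTEXT_TOKENS / RAG_CONTEXT_TOKENS
full_cost = input_cost(FULL_CONTEXT_TOKENS, PRICE_PER_MILLION)
rag_cost = input_cost(RAG_CONTEXT_TOKENS, PRICE_PER_MILLION)
print(f"{ratio:.0f}x cheaper: ${full_cost:.2f} vs ${rag_cost:.4f} per query")
```

Under these assumptions the ratio is 1,000,000 / 800 = 1,250. A larger retrieved context shrinks the gap proportionally, so the exact multiple depends on how much context your RAG system actually sends.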

What is the difference between Vector Search and GraphRAG?

Standard Vector Search finds information based on “semantic similarity” (e.g., finding documents about “cars” when you search for “vehicles”). GraphRAG maps the actual relationships between entities (e.g., “Part A” is “manufactured by” “Company B” which is “located in” “Region C”).

Key Rule: Use Vector Search for simple lookups. Use GraphRAG when your questions require multi-step reasoning across your data (e.g., “Which of our suppliers are affected by the new regulations in France?”).
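The multi-step reasoning that GraphRAG enables can be sketched with a tiny set of relationship triples. This is a simplified illustration using the “Part A” example above; the extra entities, the `follow` helper, and the in-memory list are assumptions for the example, where a real deployment would use a graph database.

```python
# (subject, relation, object) triples. The first two come from the example
# in the text; the others are invented to give the query something to skip.
TRIPLES = [
    ("Part A", "manufactured_by", "Company B"),
    ("Company B", "located_in", "Region C"),
    ("Part D", "manufactured_by", "Company E"),
    ("Company E", "located_in", "Region F"),
]


def follow(start: str, path: list[str], triples: list[tuple[str, str, str]]) -> set[str]:
    """Walk a chain of relations from a start entity, one hop per relation."""
    frontier = {start}
    for relation in path:
        frontier = {
            obj for (subj, rel, obj) in triples
            if subj in frontier and rel == relation
        }
    return frontier


# Two-hop question: in which region is Part A manufactured?
regions = follow("Part A", ["manufactured_by", "located_in"], TRIPLES)
print(regions)
```

A similarity search alone would struggle here, because no single document needs to mention both “Part A” and “Region C”; the answer only emerges by chaining two facts.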

How does “Agentic RAG” differ from a standard AI chatbot?

A standard chatbot is reactive; it retrieves info and summarizes it. Agentic RAG is proactive. If the AI realizes the first search didn’t provide a complete answer, it can autonomously decide to:

- Search a different database.

- Browse the web for real-time news.

- Cross-reference two different documents to find a discrepancy.

It essentially “thinks” through the research process rather than just performing a single keyword search.
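The “search again if the first attempt was not enough” behaviour can be sketched as a simple loop. This is a simplification under stated assumptions: the source names are hypothetical, the sources are tried in a fixed order, and `is_sufficient` is a plain function, whereas in a real agentic system an LLM would typically choose the next tool and judge whether the evidence is complete.

```python
from typing import Callable

SearchFn = Callable[[str], list[str]]


def agentic_retrieve(
    question: str,
    sources: dict[str, SearchFn],
    is_sufficient: Callable[[str, list[str]], bool],
) -> tuple[list[str], list[str]]:
    """Query sources one at a time until the evidence is judged sufficient.

    Returns the collected evidence and the list of sources consulted.
    """
    evidence: list[str] = []
    trace: list[str] = []
    for name, search in sources.items():
        evidence.extend(search(question))
        trace.append(name)
        if is_sufficient(question, evidence):
            break  # stop early; no need to consult the remaining sources
    return evidence, trace


# Hypothetical sources: the first finds nothing, the second has the answer.
sources = {
    "internal_wiki": lambda q: [],
    "contracts_db": lambda q: ["Supplier X contract renews on 1 March."],
    "web_news": lambda q: ["Unrelated headline."],
}

evidence, trace = agentic_retrieve(
    "When does the Supplier X contract renew?",
    sources,
    is_sufficient=lambda q, ev: len(ev) > 0,
)
print(trace, evidence)
```

Keeping a trace of which sources were consulted is useful in practice, since it lets you audit how the system arrived at an answer.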

What is the typical ROI for a RAG implementation in 2026?

Most enterprises see ROI in three main areas:

- Zero Retraining Costs: You can update your AI’s knowledge instantly by adding a file to your database, avoiding the cost of fine-tuning or retraining a model.

- Reduced “Time-to-Insight”: Analysts spend 60% less time hunting for data across siloed PDFs and emails.

- Lower Support Costs: Agentic bots can resolve 70-80% of Tier 1 queries by accessing order histories and manuals directly.

How does RAG help with EU AI Act compliance?

Under the EU AI Act (most provisions of which apply from August 2026), high-risk AI systems must be transparent and explainable. RAG supports this “by design” through citations. Because the AI pulls from specific documents, it can provide a direct link or footnote to the source material, allowing a human-in-the-loop to verify the answer.
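As a rough sketch of how footnote-style citations can be attached to an answer, the function below numbers each source on first use and appends a source list. It assumes the system already knows which source each sentence came from; the file names and the `format_with_citations` helper are hypothetical.

```python
def format_with_citations(claims: list[tuple[str, str]]) -> str:
    """Render (sentence, source) pairs as text with numbered footnotes."""
    numbers: dict[str, int] = {}
    parts: list[str] = []
    for sentence, source in claims:
        # Number each source by first appearance and reuse it afterwards.
        n = numbers.setdefault(source, len(numbers) + 1)
        parts.append(f"{sentence} [{n}]")
    footnotes = [f"[{n}] {source}" for source, n in numbers.items()]
    return " ".join(parts) + "\n\nSources:\n" + "\n".join(footnotes)


answer = format_with_citations([
    ("Refunds are issued within 14 days.", "refund_policy.pdf"),
    ("Returns need the order number.", "returns_faq.md"),
    ("Refunds go to the original payment method.", "refund_policy.pdf"),
])
print(answer)
```

Each sentence carries a marker that points to a specific document, which is what lets a human reviewer trace a claim back to its source.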

How is data privacy handled in a RAG system?

In 2026, we use Role-Based Access Control (RBAC). When a user asks the AI a question, the retriever first checks that user’s credentials against the company’s permissions (e.g., SharePoint or SQL access). The AI only “sees” and retrieves the data that the specific employee is authorized to view, preventing sensitive data leaks.
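The key design point is that the permission check happens before retrieval, so restricted documents never reach the model at all. Here is a minimal sketch, assuming each document carries a set of allowed roles; the `Doc` type, the role names, and the simple word-match search are assumptions for the example, and a real system would read permissions from the source system (e.g., SharePoint or SQL).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    text: str
    allowed_roles: frozenset[str]


def retrieve_for_user(query: str, docs: list[Doc], user_roles: set[str]) -> list[Doc]:
    """Filter by permission first, then search only what the user may see."""
    visible = [d for d in docs if d.allowed_roles & user_roles]
    words = set(query.lower().split())
    return [d for d in visible if words & set(d.text.lower().split())]


# Hypothetical documents and roles.
docs = [
    Doc("Refund policy: refunds are issued within 14 days.", frozenset({"support", "hr"})),
    Doc("Salary bands for 2026 are listed here.", frozenset({"hr"})),
]

support_results = retrieve_for_user("salary bands", docs, {"support"})
hr_results = retrieve_for_user("salary bands", docs, {"hr"})
print(len(support_results), len(hr_results))
```

Filtering before the search, rather than asking the model to withhold restricted content afterwards, means a prompt trick cannot coax out data the user was never allowed to retrieve.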