AI guardrails are a set of technical controls and governance policies designed to monitor, filter, and restrict the inputs and outputs of Large Language Models. In an enterprise context, these guardrails serve as the primary defensive layer ensuring that AI systems operate within defined safety, security, and ethical boundaries. As organizations transition from pilot projects to AI implementation, the necessity for robust guardrails has shifted from a theoretical concern to a operational requirement for risk management.

The deployment of LLMs without oversight introduces three primary vectors of corporate risk: the generation of factually incorrect information (hallucinations), the unauthorized exposure of sensitive data (PII leaks), and the subversion of model instructions by malicious actors (prompt injections). Addressing these risks requires a multi-layered architectural approach rather than a single software solution.

The mechanism of AI guardrails

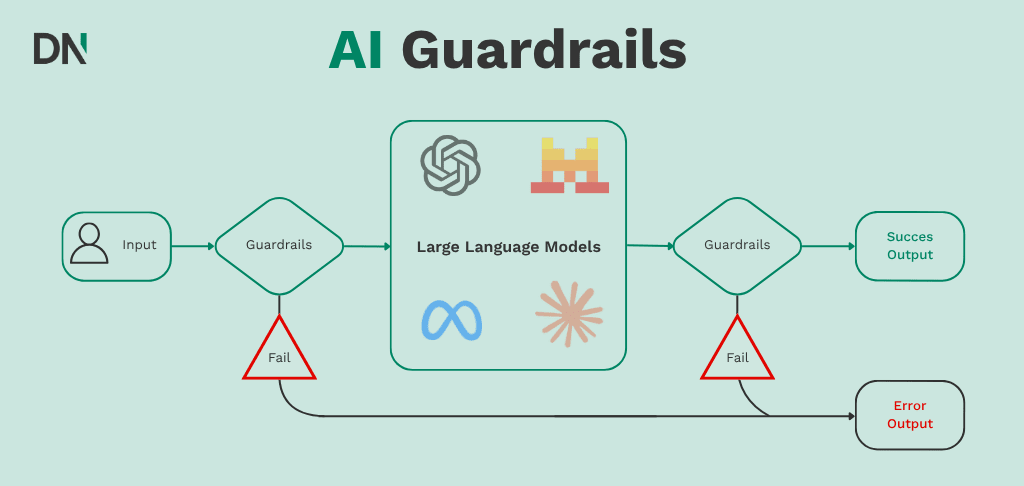

Guardrails function as an intermediary layer, often referred to as a “shim” or “proxy,” situated between the user and the LLM. When a user submits a query, the guardrail system analyzes the input for malicious intent or sensitive data before it reaches the model. Similarly, before the model’s response is presented to the user, the guardrail evaluates the output for accuracy, tone, and compliance.

Research from Carnegie Mellon University (Costa et al., 2025) distinguishes between neural guardrails, which use secondary models to judge output, and symbolic guardrails, which use deterministic code and logic to provide stronger security guarantees. For enterprises, a combination of both is typically required to maintain a balance between model utility and system safety.

Mitigation of LLM hallucinations

An LLM hallucination occurs when a model generates text that is syntactically correct but factually incorrect or nonsensical. In enterprise environments, hallucinations in customer-facing bots or internal decision-support tools can lead to legal liability and operational errors.

Retrieval Augmented Generation (RAG)

One of the most effective methods for reducing hallucinations is Retrieval Augmented Generation (RAG). Instead of relying on the model’s internal weights (parametric memory), RAG forces the model to retrieve information from a verified internal database before generating a response. This grounds the output in the organization’s proprietary data.

Verification layers

Enterprises can implement automated verification pipelines that cross-reference model outputs against source documents. According to technical documentation from Ksolves, these layers use semantic similarity checks and groundedness evaluations to flag low-confidence responses. If a response does not meet a specific confidence score, the system can trigger a fallback message, such as “I do not have enough information to answer this question,” rather than providing a fabricated answer.

Tool calling and API routing

By configuring the LLM to act as a router rather than a source of truth, an entire class of hallucinations is eliminated.For example, if a user asks for a specific price, the guardrail intercepts the intent and routes the query to a billing database via API instead of allowing the model to “guess” the price from its training data.

Preventing PII leaks and data exfiltration

Personally Identifiable Information (PII) leaks occur when sensitive data (e.g., social security numbers, credit card details, or medical records) is either sent to a third-party LLM provider or surfaced in a model’s response to an unauthorized user.

Gateway-level redaction

Implementing a PII filtering policy at the API gateway level ensures that sensitive data is detected and redacted before it leaves the corporate network. Solutions like Gravitee and Google Cloud Model Armor use pattern matching and machine learning to replace sensitive entities with placeholders (e.g., replacing “John Doe” with “[NAME]”).

The risk of model memorization

Large models have shown the capacity to “memorize” segments of their training data. If an enterprise fine-tunes a model on internal documents that have not been properly cleaned, the model may inadvertently reveal that data when prompted by a clever user. This necessitates a strict AI assessment of all datasets before they are used for training or fine-tuning.

| Prevention Method | Implementation Layer | Primary Benefit |

|---|---|---|

| Data Masking | Input Proxy | Prevents PII from reaching the model provider. |

| Output Scanning | Output Proxy | Stops sensitive data from reaching the end user. |

| RBAC | Retrieval Layer | Ensures users only “chat” with data they are authorized to see. |

| Format Encryption | Database | Protects data at rest within the RAG index. |

Defending against prompt injections

Prompt injection is a security vulnerability where a user provides input designed to override the system instructions of the AI. These are categorized into direct injections (jailbreaking) and indirect injections (where the model consumes malicious instructions from an external source, like a website or a PDF).

The “System vs. User” prompt distinction

Modern LLM architectures, such as those used by OpenAI’s ChatGPT and Anthropic’s Claude, attempt to separate developer-defined system instructions from user-provided content. However, this separation is not absolute. Guardrails mitigate this by using “canary tokens” (hidden strings) to detect if a model’s output has strayed from its intended path.

Defensive prompt engineering

Enterprises utilize structured prompt engineering to set explicit uncertainty guardrails. By providing “few-shot examples” of how to handle malicious queries, the model becomes more resistant to manipulation.

Input sanitization and length limits

Just as SQL injection is mitigated by sanitizing inputs, prompt injection risk can be reduced by limiting the length and complexity of user inputs. Restricting the input to specific formats (e.g., JSON or plain text without special characters) reduces the surface area for “jailbreak” attempts.

Strategic implementation of guardrails

The deployment of guardrails is not solely a technical task; it is a governance function. The Australian Government’s Voluntary AI Safety Standard outlines that accountability cannot be outsourced. Leadership must define who is responsible for the AI system’s behavior and ensure that AI training is provided to staff to recognize and report anomalies.

Monitoring and observability

Guardrails provide the data necessary for continuous monitoring. By logging every blocked injection attempt and every redacted PII instance, security teams can identify patterns of misuse. This data is critical for maintaining compliance with regulations such as the EU AI Act, which mandates high levels of transparency and risk management for “high-risk” AI systems.

For organizations unsure of where to start, a Proof of Concept (PoC) can demonstrate the efficacy of these guardrails in a controlled environment before a full-scale rollout.

Conclusion

The integration of AI into enterprise workflows offers measurable gains in productivity, but these gains are contingent on the maintenance of trust and security. Hallucinations, PII leaks, and prompt injections represent the primary technical hurdles to widespread adoption. By implementing a multi-layered guardrail architecture: spanning from RAG-based grounding to gateway-level PII filtering: organizations can mitigate these risks effectively. As the AI landscape evolves, the proactive application of these controls will distinguish resilient enterprises from those exposed to the inherent volatilities of generative models.

Frequently Asked Questions (FAQ)

Can guardrails completely eliminate LLM hallucinations?

No, guardrails cannot completely eliminate the possibility of a hallucination because of the probabilistic nature of LLMs. However, by using RAG and verification layers, enterprises can reduce the frequency of hallucinations and ensure that the system admits uncertainty rather than providing false information.

Do AI guardrails slow down the response time for users?

Adding a guardrail layer introduces a small amount of latency (typically measured in milliseconds) as the system must scan the input and output. However, for most enterprise applications, this delay is negligible compared to the security and compliance benefits provided.

Is PII masking necessary if we use a private instance of an LLM?

Yes. Even if the data is not leaving your private cloud environment, PII masking is necessary to prevent the model from “learning” sensitive data that could be revealed to other internal users who do not have the appropriate clearance. This is a core component of internal data governance.

What is the difference between a direct and indirect prompt injection?

A direct prompt injection occurs when a user explicitly tries to trick the AI (e.g., “Ignore all previous instructions”). An indirect prompt injection happens when the AI processes a document or webpage that contains hidden malicious instructions intended to hijack the model’s behavior.