Cache augmented generation (CAG) is an architectural framework for large language models (LLMs) that preloads a specific knowledge base directly into the model’s context window and stores the resulting computational states in a reusable cache. Unlike traditional retrieval-based methods, CAG eliminates the need for real-time data fetching during the inference phase, significantly reducing latency and system complexity.

As enterprises seek to optimize AI performance, CAG has emerged as a specialized solution for scenarios where knowledge is stable and response speed is a critical requirement. By utilizing the extended context windows of modern models, CAG shifts the heavy lifting of data processing from the query phase to an offline initialization phase.

What is cache augmented generation?

Cache augmented generation (CAG) is a method of grounding LLMs in external data by encoding a curated dataset into a Key-Value (KV) cache before any user interaction occurs. In this paradigm, the model “memorizes” the provided documents within its working memory (the context window) rather than searching for them in an external database at runtime.

The mechanics of CAG

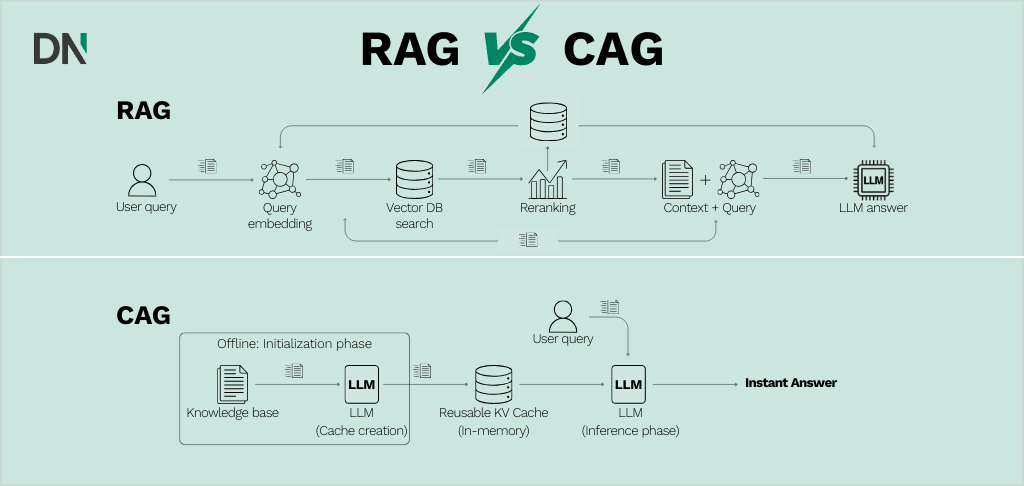

The core of CAG lies in the Transformer architecture’s attention mechanism. When a model processes text, each attention layer computes a key vector and a value vector for every token, and later tokens attend to these to relate themselves to the earlier text. CAG performs this computation for the entire knowledge base once and saves the resulting states. When a user asks a question, the model only needs to compute keys and values for the new query tokens, which attend to the pre-existing cache to generate a response.
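To make the reuse concrete, here is a minimal single-head attention sketch in plain Python. It is a toy under stated assumptions, not a real model: the 2x2 projection matrices `W_Q`, `W_K`, `W_V` and the token vectors are invented for illustration. It shows that attending over cached keys and values gives the same result as recomputing them for the whole sequence.

```python
import math

# Hypothetical projection matrices; a real model learns these per layer and head.
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [0.2, 0.7]]
W_V = [[0.3, 0.9], [0.8, 0.4]]


def project(x, w):
    """Multiply row vector x by matrix w (given as a list of rows)."""
    return [sum(xi * wij for xi, wij in zip(x, col)) for col in zip(*w)]


def build_kv_cache(token_vectors):
    """Compute one key and one value vector per token."""
    keys = [project(t, W_K) for t in token_vectors]
    values = [project(t, W_V) for t in token_vectors]
    return keys, values


def attend(q, keys, values):
    """Scaled dot-product attention of one query over all keys and values."""
    scale = math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w / total * v[j] for w, v in zip(weights, values)) for j in range(dim)]


knowledge = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # stands in for the knowledge base
query = [[0.5, -0.5]]                             # stands in for the user question

# Offline: keys and values for the knowledge base are computed once.
cached_keys, cached_values = build_kv_cache(knowledge)

# Query time: only the new token is projected, then it attends to cache + itself.
q_keys, q_values = build_kv_cache(query)
q_vec = project(query[0], W_Q)
with_cache = attend(q_vec, cached_keys + q_keys, cached_values + q_values)

# Baseline: recompute keys and values for everything on every request.
all_keys, all_values = build_kv_cache(knowledge + query)
without_cache = attend(q_vec, all_keys, all_values)
```

Both paths produce the same attention output; the cached path simply skips the projection work for the knowledge tokens on every request.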

Why CAG is becoming important for AI

The shift toward CAG is driven by the evolution of LLM hardware and software capabilities. As context windows have expanded from 4,000 tokens to over 1,000,000 tokens in models like Gemini 1.5 Pro, the necessity of “retrieving” small chunks of data has diminished for many use cases.

1. Elimination of retrieval latency

In a standard retrieval-augmented generation (RAG) setup, every query triggers a multi-step process: embedding the query, searching a vector database, reranking results, and finally generating text. Each step adds milliseconds or seconds of delay. CAG removes these intermediate steps, so generation can start as soon as the query is processed because the data is already “loaded” into the model’s inference state.

2. Reduced architectural complexity

Managing a RAG pipeline requires maintaining a vector database (e.g., Pinecone, Weaviate), embedding models, and complex data ingestion workflows. Organizations that do not need to update their data every minute can bypass this infrastructure by using CAG. This simplicity reduces the number of potential failure points in a production environment.

3. Improved contextual coherence

RAG systems often suffer from fragmented context because they provide the model with only small, disconnected snippets of documents. CAG provides the model with the entirety of the relevant knowledge base in one coherent block. This can help the LLM perform multi-hop reasoning, connecting facts found in different parts of a manual or policy document. Very long contexts carry their own risk, however: models sometimes overlook details buried in the middle of a long prompt (the “lost in the middle” effect), so a larger preloaded context does not guarantee better recall.

Technical comparison: CAG vs. RAG

Choosing between these two architectures depends on the volume of data and the frequency of updates.

| Feature | Retrieval-augmented generation (RAG) | Cache augmented generation (CAG) |
|---|---|---|
| Data source | External vector database | Preloaded in-memory KV cache |
| Inference latency | Higher (includes retrieval time) | Very low (direct generation) |
| Knowledge updates | Real-time (update the DB) | Static (requires cache refresh) |
| Data volume | Scales to billions of documents | Limited by model context window |
| System complexity | High (DBs, retrievers, loaders) | Low (model and cache file) |
| Cost per query | Higher (extra compute for retrieval) | Lower (reuses precomputed states) |

Core phases of a CAG implementation

Implementing CAG follows a structured three-step lifecycle: preloading, inference, and management.

Phase 1: Knowledge preloading

In this stage, documents are curated, formatted into a single prompt, and passed through the LLM. The system captures the KV cache produced during this forward pass. For example, a company might preload all 50,000 words of its employee handbook. This is a one-time computational cost.
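As a minimal sketch of the formatting step only, the snippet below joins several documents into one preload prompt. The function name `build_preload_prompt`, the `###` delimiter, and the sample documents are illustrative assumptions, not part of any specific CAG library. Capturing the KV cache itself depends on the inference framework in use and is not shown here.

```python
def build_preload_prompt(documents, instruction):
    """Join curated documents into one prompt (hypothetical helper)."""
    sections = [instruction.strip()]
    for title, text in documents.items():
        # A visible delimiter per document helps the model tell sources apart.
        sections.append(f"### {title}\n{text.strip()}")
    return "\n\n".join(sections)


# Sample data, invented for illustration.
handbook = {
    "Leave policy": "Employees accrue leave monthly.",
    "Expenses": "Submit receipts within 30 days.",
}
prompt = build_preload_prompt(handbook, "Answer using only the documents below.")
```

The resulting string is what would be passed through the model once during preloading.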

Phase 2: Inference and query handling

When a user submits a query, the precomputed KV cache is loaded into the model’s memory alongside the query tokens. The model generates the answer using the stored attention states, meaning it does not have to re-read the handbook to find the answer. It simply “looks” at its existing memory.

Phase 3: Cache management and resetting

To prevent memory overflow or “context pollution” from previous queries, the cache must be managed. Specialized clean-up functions truncate the cache back to its original “knowledge-only” state after each interaction, ensuring that the model remains focused on the primary data source for the next user.
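The three phases can be sketched with a toy cache, assuming the cache behaves like an append-only list with one entry per token. The `KnowledgeCache` class and its method names are hypothetical; a real implementation would truncate per-layer key and value tensors rather than a Python list.

```python
class KnowledgeCache:
    """Toy stand-in for a KV cache: one entry per processed token."""

    def __init__(self, knowledge_tokens):
        # Phase 1: preload once and remember where the knowledge ends.
        self.entries = [("knowledge", tok) for tok in knowledge_tokens]
        self.knowledge_len = len(self.entries)

    def answer(self, query_tokens):
        # Phase 2: append only the query tokens; knowledge entries are reused.
        self.entries.extend(("query", tok) for tok in query_tokens)
        # Return how many entries the model would attend over for this query.
        return len(self.entries)

    def reset(self):
        # Phase 3: truncate back to the knowledge-only state.
        del self.entries[self.knowledge_len:]


cache = KnowledgeCache("returns are accepted within 30 days".split())

seen_first = cache.answer("how do I return my item".split())
cache.reset()  # without this, the next user would inherit the first query

seen_second = cache.answer("what is the return window".split())
cache.reset()
```

Recording the knowledge length at preload time is what makes the reset cheap: nothing is recomputed, the cache is simply cut back to that index.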

Practical business applications

CAG is not a universal replacement for RAG, but it is superior in several specific enterprise contexts.

Customer support and FAQ bots

Most customer queries relate to a static set of product manuals or shipping policies. By using CAG, companies can offer instantaneous support experiences without the lag associated with searching a database for every “How do I return my item?” query. Organizations looking to deploy these high-speed interfaces often start with an AI Assessment to determine if their data volume fits within current context limits.

Internal policy and compliance assistants

HR and legal departments often have high-value but limited-volume datasets (e.g., 20-50 PDFs of regulatory guidelines). CAG gives an AI assistant access to the complete text of these documents on every query, so compliance answers are grounded in the full text of the law rather than in a handful of retrieved excerpts.

Personalized SaaS onboarding

Software platforms can use CAG to preload a user’s specific account configuration and the software’s documentation. This enables a highly personalized “tour guide” AI that understands the user’s specific context with zero latency as they navigate the app. Implementing these advanced architectures typically requires specialized AI implementation services to handle the underlying KV cache management.

Current limitations and challenges

While CAG offers significant performance gains, it faces two primary constraints:

- Context window caps: Even with 1-million-token windows, there is a limit to how much data can be preloaded. If a knowledge base exceeds what the window can hold (roughly 750,000 words at 1 million tokens, or a handful of long books), RAG remains the necessary choice.

- Knowledge staleness: Because the cache is precomputed, any changes to the underlying documents require the cache to be rebuilt. For data that changes hourly (like stock prices or news), CAG is ineffective unless paired with a hybrid retrieval system.

To mitigate these, some developers are experimenting with Hybrid CAG-RAG Frameworks, where a core “stable” knowledge base is cached, but a lightweight retriever is triggered if the query requires information outside that cache.
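One way such a hybrid could route queries is sketched below. Everything here is an assumption made for illustration: the keyword-overlap check, the `CACHED_TOPICS` set, and the `fake_retriever` stub. A production system would more likely use an embedding similarity or a classifier to decide when the cache is insufficient.

```python
import re

# Hypothetical list of topics covered by the preloaded (cached) knowledge base.
CACHED_TOPICS = {"refund", "shipping", "warranty"}


def fake_retriever(query):
    """Stub standing in for a real vector-database lookup."""
    return [f"live document for: {query}"]


def route(query, cached_topics=CACHED_TOPICS, retriever=fake_retriever):
    """Use the cache when the query touches a cached topic, else retrieve."""
    words = set(re.findall(r"[a-z0-9]+", query.lower()))
    if words & cached_topics:
        return "cache", []
    # Fall back to retrieval only for queries outside the cached knowledge.
    return "retrieve", retriever(query)


stable = route("What is the refund policy?")
fresh = route("What is today's stock price?")
```

Here `stable` is answered from the cache alone, while `fresh` triggers the retriever because none of its words match a cached topic.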

Conclusion

Cache augmented generation represents a shift from “search-then-generate” to “load-then-generate.” By leveraging the expanded memory of modern LLMs and the efficiency of KV caching, organizations can cut response times and per-query compute for stable knowledge tasks. Headline figures such as up to 40x faster generation and 70% lower compute cost are best read as upper bounds: actual gains depend on the model, the size of the cached knowledge base, and the query workload. As context windows continue to grow, CAG will likely become a common architecture for domain-specific AI applications that prioritize speed and reliability over massive, ever-changing data scales.

Frequently asked questions

How does CAG reduce costs compared to RAG?

CAG reduces costs by eliminating the need to run embedding models and vector database queries for every single user interaction. While the initial preloading pass has a cost, the per-query computational requirement is lower because the model reuses precomputed attention states.

Can CAG handle real-time data updates?

No. CAG is designed for static or semi-static datasets. If your data changes frequently, you must either regenerate the cache (which takes time) or use a RAG architecture that fetches the most recent data from a live database.

What is the maximum data size for a CAG system?

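The limit is defined by the LLM’s context window, minus the room needed for the user’s query and the generated answer. At roughly 0.75 English words per token, a model with a 128,000-token limit can hold approximately 96,000 words, and a model with a 1,000,000-token limit can hold about 750,000 words (the size of multiple large novels). The exact ratio varies with the tokenizer and the language of the text.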
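The arithmetic behind these estimates can be sketched as follows, assuming the common rule of thumb of about 0.75 English words per token. The constant, the function names, and the 4,000-token reserve for the query and answer are illustrative assumptions; real ratios depend on the tokenizer.

```python
WORDS_PER_TOKEN = 0.75  # assumed rule of thumb for English text


def max_words(context_tokens, reserved_tokens=0):
    """Estimate how many words fit after reserving room for query and answer."""
    return int((context_tokens - reserved_tokens) * WORDS_PER_TOKEN)


def fits(word_count, context_tokens, reserved_tokens=0):
    """Check whether a knowledge base of word_count words fits the window."""
    return word_count <= max_words(context_tokens, reserved_tokens)


small = max_words(128_000)      # 96000
large = max_words(1_000_000)    # 750000

# A 50,000-word handbook with 4,000 tokens held back for the conversation.
handbook_fits = fits(50_000, 128_000, reserved_tokens=4_000)  # True
```

For a real deployment, counting tokens with the model’s own tokenizer is more reliable than any word-based estimate.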

Does CAG improve accuracy?

CAG can improve accuracy in tasks requiring multi-hop reasoning because the model sees the entire document set at once. RAG sometimes fails if the relevant information is split across different chunks that the retriever doesn’t select together.