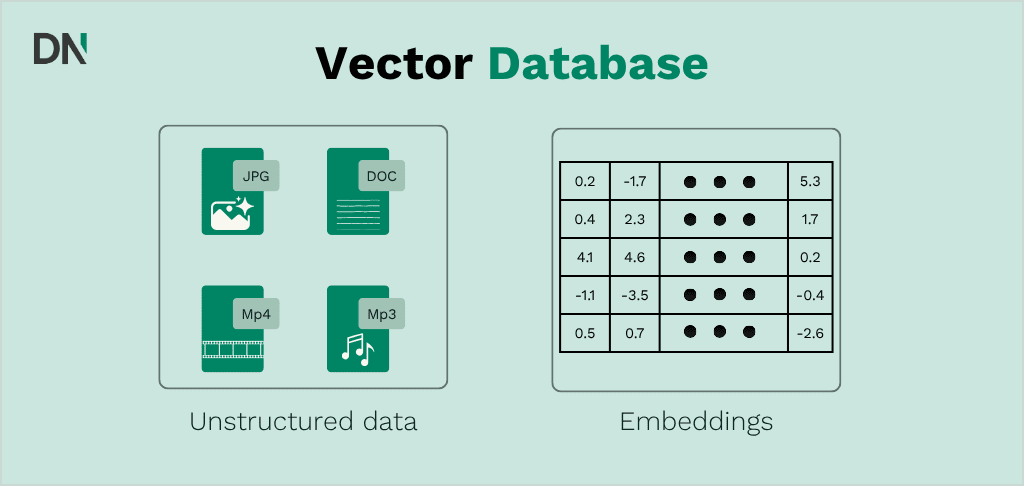

A vector database is a specialized storage system designed to index and retrieve high-dimensional data, commonly referred to as vector embeddings. In 2026, these databases serve as the fundamental memory layer for large language models (LLMs) and Retrieval-Augmented Generation (RAG) architectures. By converting unstructured data (text, images, and audio) into numerical arrays, vector databases enable enterprises to perform semantic searches based on intent and context rather than keyword matching.

The enterprise landscape has shifted from experimental pilots to production-scale AI deployments. Consequently, the selection of a vector database now centers on three core pillars: architectural scalability, multi-tenancy for data security, and the integration of hybrid search capabilities.

The role of vector databases in the AI stack

Vector databases function by mapping data points into a continuous geometric space. When a query is initiated, the database calculates the mathematical distance between the query vector and the stored vectors to find the most relevant information. This process is essential for AI strategy development as it allows organizations to utilize their proprietary data within generative AI workflows.
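To make the distance calculation concrete, here is a minimal sketch of a brute-force similarity search using only the Python standard library. The document IDs and three-dimensional vectors are made up for illustration; real embeddings have hundreds or thousands of dimensions, and production databases use approximate indexes rather than scanning every vector.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat zero vectors as dissimilar to everything
    return dot / (norm_a * norm_b)

def top_k(query, stored, k=2):
    """Score every stored vector against the query and return the k best matches."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in stored.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Hypothetical toy embeddings, not the output of any real model.
STORED = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "returns-faq": [0.8, 0.2, 0.1],
}

results = top_k([1.0, 0.0, 0.0], STORED, k=2)
for doc_id, score in results:
    print(f"{doc_id}: {score:.3f}")
```

The two documents whose vectors point in nearly the same direction as the query rank first, even though no keywords were compared.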

In the current enterprise environment, vector databases are no longer standalone tools. They are integrated components of an end-to-end data pipeline. For organizations considering an AI implementation, the database choice impacts search latency, cost-to-serve, and the accuracy of AI-generated responses.

A notable 2026 trend is consolidation: many organizations are reconsidering whether a dedicated vector database is necessary at all. For RAG workloads below roughly 5 to 50 million vectors, pgvector inside an existing PostgreSQL setup performs adequately for many teams and removes the need to introduce a separate specialized system. That makes pgvector worth evaluating before committing to a dedicated solution; it is covered in its own section below.

Pinecone: The serverless standard for managed scaling

Pinecone has positioned itself as the primary managed service for enterprises prioritizing operational simplicity. Its serverless architecture remains the industry benchmark for decoupling storage from compute, allowing users to pay only for the data they query.

Technical architecture and performance

Pinecone Serverless uses a tiered storage approach. It stores the primary vector index on low-cost object storage (such as S3) and fetches only the necessary segments into a high-speed cache during a search. By Pinecone’s own estimates, this lowers costs by up to 50x compared with traditional pod-based architectures.
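The general idea of tiered storage can be illustrated with a small least-recently-used cache in front of a slow store. This is a conceptual sketch only, not Pinecone's implementation: the `SegmentCache` class, the segment names, and the dictionary standing in for object storage are all hypothetical.

```python
from collections import OrderedDict

class SegmentCache:
    """Keeps the most recently used index segments in memory (hypothetical sketch)."""

    def __init__(self, fetch_segment, capacity=2):
        self._fetch = fetch_segment      # slow path, e.g. a read from object storage
        self._capacity = capacity        # how many segments fit in the fast tier
        self._cache = OrderedDict()
        self.cold_reads = 0              # counts trips to the slow tier

    def get(self, segment_id):
        if segment_id in self._cache:
            self._cache.move_to_end(segment_id)  # mark as most recently used
            return self._cache[segment_id]
        self.cold_reads += 1
        segment = self._fetch(segment_id)
        self._cache[segment_id] = segment
        if len(self._cache) > self._capacity:
            self._cache.popitem(last=False)      # evict the least recently used
        return segment

# A plain dict stands in for object storage in this sketch.
OBJECT_STORE = {
    "seg-a": [[0.1, 0.2]],
    "seg-b": [[0.3, 0.4]],
    "seg-c": [[0.5, 0.6]],
}

cache = SegmentCache(OBJECT_STORE.__getitem__, capacity=2)
for segment_id in ["seg-a", "seg-b", "seg-a", "seg-c", "seg-b"]:
    cache.get(segment_id)

print(f"cold reads: {cache.cold_reads} of 5 lookups")
```

Only lookups that miss the cache pay the cost of the slow tier, which is why workloads that repeatedly touch a small part of the index benefit most from this design.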

Key technical specifications include:

- Indexing algorithm: Proprietary graph-based indexing optimized for low-latency retrieval.

- Scalability: Supports billions of vectors without requiring manual sharding.

- Metadata filtering: Allows for real-time filtering of results based on key-value pairs (e.g., date, region, or department).

- Hybrid search: Pinecone supports sparse-dense hybrid search via proprietary sparse encoding. This differs from the standard BM25 implementations used by open-source alternatives, but provides meaningful hybrid search functionality for most enterprise use cases.

- Pinecone Assistant: Reached general availability in January 2025. This product wraps chunking, embedding, vector search, reranking, and answer generation behind a single endpoint, shifting Pinecone beyond pure vector storage into a more complete managed RAG solution.

Enterprise use cases and data sovereignty

Organizations often choose Pinecone when they require a custom AI solution that must scale rapidly without a dedicated DevOps team. It is frequently used in global customer support bots where uptime and managed infrastructure are critical. Pinecone holds SOC 2 Type II and ISO 27001 certifications, states GDPR alignment, and has a HIPAA attestation, making it a common choice in regulated sectors such as finance and healthcare.

On data sovereignty: Pinecone’s BYOC (Bring Your Own Cloud) mode reached general availability in 2024, allowing clusters to run inside a customer’s own AWS, Azure, or GCP account. This provides hard data isolation for organizations with strict compliance requirements and meaningfully changes the on-premise calculus compared to earlier years when Pinecone was only available as a public cloud service.

Weaviate: The open-source leader in hybrid search

Weaviate is an open-source vector database that emphasizes data modularity and flexibility. Its primary differentiator in 2026 is its mature and feature-rich hybrid search stack, which combines traditional keyword matching with modern vector embeddings.

Core features and modularity

Weaviate is built around a “class” system, similar to object-oriented programming, which makes it intuitive for software engineers to integrate. It supports a wide range of embedding models through built-in modules.

Key performance drivers include:

- Hybrid search: Merges vector results with keyword results using either Reciprocal Rank Fusion (RRF) or Relative Score Fusion (RSF). RSF works from the original search scores rather than rank order alone, which can yield higher-fidelity rankings. BlockMax WAND reached general availability in 2025, making the keyword search component roughly 10 times faster than in previous versions.

- Multi-vector support: MUVERA encoding now handles multi-vector documents natively, which is relevant for complex document structures.

- Knowledge graph capabilities: Weaviate is the only major vector database that natively combines vector search with a knowledge graph layer, enabling applications that need to represent and traverse structured relationships between entities alongside semantic similarity.

- Multi-tenancy: Offers robust isolation for different users or clients within a single cluster, a requirement for SaaS providers.

- Deployment flexibility: Can be deployed on-premise, in a private cloud, or via Weaviate Cloud Services (WCS).
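The two fusion strategies mentioned in the list above can be sketched in a few lines. This is an illustrative version rather than Weaviate's code: the RRF constant `k=60`, the min-max normalization used for the score-based variant, the `alpha` weighting, and the sample scores are assumptions chosen for the example.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists using only positions: each list adds 1 / (k + rank)."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

def min_max_normalize(scores):
    """Rescale scores so the best result is 1.0 and the worst is 0.0."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}

def relative_score_fusion(vector_scores, keyword_scores, alpha=0.5):
    """Fuse using normalized scores; alpha weights the vector side."""
    vec = min_max_normalize(vector_scores)
    kw = min_max_normalize(keyword_scores)
    fused = {
        doc_id: alpha * vec.get(doc_id, 0.0) + (1 - alpha) * kw.get(doc_id, 0.0)
        for doc_id in set(vec) | set(kw)
    }
    return sorted(fused, key=fused.get, reverse=True)

# Hypothetical scores: similarities for vector search, BM25-style for keywords.
vector_scores = {"a": 0.92, "b": 0.90, "c": 0.40}
keyword_scores = {"b": 12.0, "c": 11.5, "d": 2.0}

vector_ranking = sorted(vector_scores, key=vector_scores.get, reverse=True)
keyword_ranking = sorted(keyword_scores, key=keyword_scores.get, reverse=True)

rrf_order = reciprocal_rank_fusion([vector_ranking, keyword_ranking])
rsf_order = relative_score_fusion(vector_scores, keyword_scores)
print("RRF:", rrf_order)
print("RSF:", rsf_order)
```

In this toy data, document `a` is a near-tie for the best vector match while `c` is a weak one. Rank-based fusion cannot see that gap and places `c` ahead of `a`; score-based fusion keeps `a` ahead, which is the "nuance" that score-preserving fusion is meant to retain.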

Application in specialized industries

Weaviate is often highlighted for its ability to handle complex schemas. Its open-source nature allows for deep customization, which is beneficial for industries with strict data sovereignty requirements. Organizations whose core query pattern is “find semantically similar documents that also mention a specific term or statute” will find Weaviate’s hybrid search implementation the most mature available in 2026.

Qdrant: High-performance search for resource-efficient systems

Qdrant is written in Rust, a programming language known for memory safety and high performance. Qdrant is the preferred choice for enterprises requiring high throughput and low resource consumption, particularly in edge computing or high-frequency data environments.

Technical efficiency and quantization

Qdrant focuses on scalar quantization, a process that compresses 32-bit floating-point vectors into 8-bit integers. This reduces memory usage by up to 4x while maintaining search accuracy above 99%.
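The arithmetic behind the 4x figure is simple: each dimension shrinks from a 4-byte float to a 1-byte integer. The sketch below shows a simplified per-vector min/max scheme to illustrate the idea; it is an assumption made for this example and is not Qdrant's actual quantization code, which chooses value ranges differently.

```python
def quantize(vector):
    """Map each float onto one of 256 evenly spaced levels between min and max."""
    lo, hi = min(vector), max(vector)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 when all values are equal
    codes = bytes(round((x - lo) / scale) for x in vector)
    return codes, lo, scale

def dequantize(codes, lo, scale):
    """Approximate reconstruction of the original floats."""
    return [lo + code * scale for code in codes]

# Hypothetical 4-dimensional embedding, for illustration only.
vector = [0.12, -0.53, 0.88, 0.01]
codes, lo, scale = quantize(vector)
restored = dequantize(codes, lo, scale)

float32_bytes = 4 * len(vector)  # 4 bytes per dimension as float32
int8_bytes = len(codes)          # 1 byte per dimension after quantization
max_error = max(abs(a - b) for a, b in zip(vector, restored))

print(f"{float32_bytes} bytes -> {int8_bytes} bytes")
print(f"largest reconstruction error: {max_error:.5f}")
```

The reconstruction error per dimension is bounded by half a quantization step, which is why nearest-neighbor rankings usually change very little after compression.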

Detailed technical metrics:

- Throughput: Capable of handling tens of thousands of queries per second (QPS) on standard hardware.

- API support: Provides native support for gRPC and REST APIs, ensuring compatibility with diverse tech stacks.

- HNSW implementation: Utilizes a highly optimized version of the Hierarchical Navigable Small World (HNSW) algorithm for fast approximate nearest neighbor search. The ACORN algorithm, introduced in 2025, makes filtered HNSW genuinely competitive at tight filter ratios, reinforcing Qdrant’s position as the strongest option for complex metadata filtering at scale.

- Hybrid search: Since v1.9, Qdrant supports named vectors that allow a collection to hold both a dense HNSW index and a sparse inverted index. Since v1.15.2, IDF computation is handled server-side, making Qdrant a genuine hybrid search contender alongside Weaviate.

Use cases in real-time analytics

Qdrant is frequently deployed in scenarios where real-time data ingestion is required, such as fraud detection or recommendation engines for high-traffic e-commerce platforms. Organizations seeking a technical AI demo often find Qdrant’s performance-to-cost ratio to be a significant advantage.

Pgvector: The pragmatic choice for existing PostgreSQL users

Pgvector is a PostgreSQL extension that adds vector storage and similarity search to a standard relational database. It is not a dedicated vector database, but in 2026 it has become a serious infrastructure choice for a large portion of production RAG deployments.

For most use cases at moderate scale, pgvector inside an existing PostgreSQL instance performs adequately and eliminates the need to introduce and maintain a separate specialized system. Benchmarks show pgvector with HNSW indexing achieving query times under 20ms on one million vectors at recall rates above 95%, and pgvectorscale extends competitive performance to 50 million vectors and beyond.

Key advantages include:

- No new infrastructure: If your organization already runs PostgreSQL, pgvector requires minimal additional setup.

- SQL compatibility: Vectors can be queried alongside relational data using standard SQL, which simplifies application logic.

- Hybrid queries: Combining vector similarity search with structured filters (dates, categories, user IDs) is straightforward using existing SQL capabilities.
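As a rough sketch of what this looks like in practice, the snippet below builds a parameterized query that combines a cosine-distance ordering with ordinary SQL filters. The `documents` table, its columns, and the helper names are hypothetical; the `vector` type, the `hnsw` index method, `vector_cosine_ops`, and the `<=>` cosine-distance operator come from pgvector. The `%s` placeholders assume a psycopg-style driver, and nothing here connects to a database.

```python
# One-time setup, shown as a string for reference (hypothetical schema).
SETUP_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS documents (
    id bigserial PRIMARY KEY,
    department text,
    created_at date,
    body text,
    embedding vector(3)  -- set this to your embedding model's dimension
);
CREATE INDEX IF NOT EXISTS documents_embedding_idx
    ON documents USING hnsw (embedding vector_cosine_ops);
"""

def to_vector_literal(embedding):
    """Format a list of numbers as a pgvector text literal such as '[0.1,0.2]'."""
    return "[" + ",".join(str(float(x)) for x in embedding) + "]"

def build_filtered_search(embedding, department, since, limit=5):
    """Return (sql, params) for a vector search restricted by relational filters."""
    sql = (
        "SELECT id, body, embedding <=> %s::vector AS cosine_distance "
        "FROM documents "
        "WHERE department = %s AND created_at >= %s "
        "ORDER BY embedding <=> %s::vector "
        "LIMIT %s"
    )
    literal = to_vector_literal(embedding)
    # The vector appears twice: once in the SELECT list, once in ORDER BY.
    params = (literal, department, since, literal, limit)
    return sql, params

sql, params = build_filtered_search([1, 0, 0], "legal", "2025-01-01", limit=3)
print(sql)
print(params)
```

With a driver such as psycopg, the pair would typically be passed to `cursor.execute(sql, params)`. Keeping the filter in the same statement as the similarity ordering is what makes this pattern convenient for teams already working in SQL.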

Pgvector is not the right choice for billion-scale datasets or extremely low-latency requirements at very high query volumes. In those scenarios, dedicated databases like Pinecone or Qdrant offer superior performance and specialized indexing. However, for teams making real infrastructure decisions in 2026, starting with pgvector and migrating later is a rational default path for most applications.

Milvus: The enterprise choice for billion-scale deployments

Milvus is an open-source vector database designed specifically for massive-scale workloads. Where other databases handle millions to tens of millions of vectors comfortably, Milvus is routinely deployed at hundreds of millions to billions of vectors in production across search companies, e-commerce platforms, and genomics research.

Milvus 2.6, which reached general availability on Zilliz Cloud in early 2026, adds hot/cold tiering for cost-efficient archival and marks a shift from pure vector search toward a full retrieval engine. GPU-accelerated indexing is supported natively.

The trade-off is operational complexity. Running Milvus in production means running Kafka, MinIO, etcd, and the Milvus coordination plane together. The Kubernetes operator simplifies this, but it involves significantly more moving parts than Qdrant or Pinecone. For most teams, Milvus is over-engineered unless they expect to operate at hundreds of millions of vectors or require its specific full-text hybrid search performance numbers.

Comparison of all five options

| Feature | Pinecone | Weaviate | Qdrant | Pgvector | Milvus |
|---|---|---|---|---|---|
| Model type | Fully managed (SaaS) | Open-source / Cloud | Open-source / Cloud | Open-source extension | Open-source / Cloud |
| Language | Go (Proprietary) | Go | Rust | C | Go / C++ |
| Primary strength | Zero-ops serverless | Hybrid search and schema | Performance and filtering | Simplicity for existing Postgres users | Billion-scale deployments |
| Indexing | Proprietary graph | HNSW + Inverted Index | HNSW + Quantization | HNSW / IVFFlat | HNSW, IVF, DiskANN |
| Hybrid search | Yes (proprietary sparse-dense) | Yes (BM25 + RSF, BlockMax WAND) | Yes (since v1.9) | Limited (manual setup) | Yes (Sparse-BM25) |
| GPU-accelerated indexing | No | Yes | Yes | No | Yes |
| Cloud / on-prem options | Cloud + BYOC | Multi-cloud and on-prem | Multi-cloud and on-prem | Wherever PostgreSQL runs | Multi-cloud and on-prem |
| Best for | Regulated enterprise, fast scaling | Complex data and knowledge graphs | High-throughput, complex filtering | Teams with existing Postgres | 100M+ vector workloads |

Criteria for choosing a vector database in 2026

1. Scale

For datasets under roughly 5 to 50 million vectors with moderate query volumes, pgvector is a reasonable starting point that avoids unnecessary infrastructure overhead. Above that threshold, or where query latency is critical at high QPS, a dedicated vector database is worth the additional operational complexity. For workloads at hundreds of millions of vectors or more, Milvus is the purpose-built option.
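A quick back-of-the-envelope calculation helps show why these thresholds matter. The sketch below estimates raw vector storage only, assuming float32 values and 1536-dimensional embeddings (both assumptions for illustration). It deliberately ignores index structures, metadata, and replication, all of which add to the real footprint.

```python
GIB = 1024 ** 3

def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Bytes needed for the vectors alone (float32 by default), excluding indexes."""
    return num_vectors * dimensions * bytes_per_value

# Assumed embedding size for this example; substitute your model's dimension.
DIMENSIONS = 1536

for num_vectors in (5_000_000, 50_000_000, 500_000_000):
    size_gib = raw_vector_bytes(num_vectors, DIMENSIONS) / GIB
    print(f"{num_vectors:>11,} vectors: about {size_gib:,.1f} GiB of raw vectors")
```

At the lower end of the range the raw vectors fit comfortably in the memory of a single large database server; at the upper end they do not, which is roughly where sharding, quantization, or disk-based indexes start to matter.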

2. Data privacy and sovereignty

If data must remain within a specific geographic region or on-premise for compliance with GDPR, open-source options like Weaviate, Qdrant, or Milvus are viable, as is pgvector running on your own infrastructure. Pinecone’s BYOC option now also satisfies hard isolation requirements for organizations that prefer a managed service.

3. Operational overhead

Managed services like Pinecone remove the need for database administrators to handle sharding, backups, and index tuning. Self-hosted Qdrant or Weaviate requires an internal team to manage the underlying infrastructure. pgvector leverages existing PostgreSQL operations knowledge, which most engineering teams already have. Milvus carries the highest operational burden of the options listed here.

4. Query complexity

Applications that rely on exact keyword matches alongside semantic meaning (e.g., searching for specific serial numbers within technical manuals, or legal research combining semantic similarity with statute references) benefit from mature hybrid search. Weaviate’s implementation is the most feature-rich, with Qdrant a strong alternative for teams that also need filtering performance. Pinecone’s hybrid search works well within its proprietary format.

Implementation strategies

The transition to a vector-first data strategy requires a structured approach. Enterprises typically begin with an AI strategy session to identify which data assets will provide the highest return on investment when embedded.

- Data ingestion: Clean and chunk unstructured data. Small chunks (512 to 1024 tokens) generally yield better search results.

- Embedding selection: Choose a model (e.g., OpenAI’s text-embedding-3-small or Cohere’s embed-english-v3.0) that matches the specific language and domain of the data.

- Index optimization: Configure HNSW parameters like efConstruction and M to balance between indexing speed and search accuracy.
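The data ingestion step can be sketched as a simple sliding window over tokens. This is a minimal illustration with two assumptions that are not from the steps above: whitespace-separated words stand in for model tokens, and consecutive chunks share a small overlap so that sentences cut at a boundary still appear intact in one chunk. A real pipeline would use the tokenizer that matches the chosen embedding model.

```python
def chunk_tokens(tokens, chunk_size=512, overlap=64):
    """Split a token list into windows of chunk_size that share `overlap` tokens."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reached the end of the document
    return chunks

# Stand-in document: 1,200 placeholder "tokens" produced by whitespace splitting.
document = " ".join(f"word{i}" for i in range(1200))
tokens = document.split()

chunks = chunk_tokens(tokens, chunk_size=512, overlap=64)
print([len(chunk) for chunk in chunks])
```

Each chunk would then be embedded and stored with metadata pointing back to its source document, so retrieved passages can be traced to where they came from.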

Conclusion

The selection of a vector database has become a foundational decision for the enterprise AI stack, though the right answer in 2026 increasingly depends on your scale and existing infrastructure.

For most teams building RAG applications at moderate scale, pgvector within an existing PostgreSQL setup is the pragmatic starting point. Pinecone offers a seamless, serverless experience for organizations prioritizing speed to market and compliance, with BYOC now addressing data sovereignty concerns that previously ruled it out. Weaviate provides the most mature hybrid search, Relative Score Fusion, and knowledge graph capabilities for complex data environments. Qdrant delivers the highest performance and filtering efficiency for high-throughput applications, reinforced by the ACORN algorithm for tight filter ratios. Milvus is the purpose-built option for teams operating at hundreds of millions of vectors where other databases start to strain.

For organizations unsure of the optimal path forward, an AI workshop can help evaluate these tools against specific business requirements and data architectures.

Frequently Asked Questions on Vector Databases (FAQ)

What is the difference between a vector database and a traditional SQL database?

Traditional SQL databases use tables and rows to store data and rely on exact matches for queries. Vector databases store data as high-dimensional points (vectors) and use mathematical algorithms to find “similar” data based on context and meaning rather than exact text matches.

Can I use a regular database like PostgreSQL as a vector database?

Yes. The pgvector extension allows PostgreSQL to store and search vectors, and for many production RAG applications it performs adequately. Benchmarks show competitive performance up to tens of millions of vectors with the right configuration. For billion-scale datasets or extremely low-latency requirements at very high query volumes, specialized databases like Pinecone or Qdrant typically offer superior performance and indexing.

How does vector database pricing work?

Pricing is generally split between storage (the amount of data indexed) and compute (the number of queries processed). Serverless models, pioneered by Pinecone, have become the standard, where you pay only for active usage rather than keeping a dedicated server running 24/7.

Why is metadata filtering important?

Metadata filtering allows you to narrow down a vector search using traditional criteria. For example, you can ask the system to find all legal documents similar to a given one, but only from the year 2025. This significantly increases the relevance of the retrieved information.
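A small sketch shows the effect. Here the filter is applied first and similarity ranking runs only on the surviving records; the record IDs, vectors, and `year` field are invented for illustration, and production databases integrate filtering into the index itself rather than scanning a list.

```python
def dot(a, b):
    """Dot product, used here as a simple similarity score."""
    return sum(x * y for x, y in zip(a, b))

def filtered_search(query, records, metadata_filter, k=3):
    """Keep records whose metadata matches every filter key, then rank by similarity."""
    candidates = [
        record for record in records
        if all(record["metadata"].get(key) == value
               for key, value in metadata_filter.items())
    ]
    candidates.sort(key=lambda record: dot(query, record["vector"]), reverse=True)
    return [record["id"] for record in candidates[:k]]

# Hypothetical records with toy two-dimensional vectors.
RECORDS = [
    {"id": "contract-2024", "vector": [0.9, 0.1], "metadata": {"year": 2024}},
    {"id": "contract-2025", "vector": [0.8, 0.2], "metadata": {"year": 2025}},
    {"id": "memo-2025", "vector": [0.1, 0.9], "metadata": {"year": 2025}},
]

query = [1.0, 0.0]
unfiltered = filtered_search(query, RECORDS, {}, k=1)
only_2025 = filtered_search(query, RECORDS, {"year": 2025}, k=2)
print("without filter:", unfiltered)
print("year == 2025:  ", only_2025)
```

Without the filter, the closest match is a 2024 document; with it, the search returns only 2025 documents, ranked by similarity.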

Do I need a vector database if I only have a small amount of data?

For very small datasets (under 10,000 documents), local vector libraries like FAISS may be sufficient. For small to mid-sized production datasets, pgvector is often the most practical option. A dedicated vector database is recommended when you require high performance at scale, strict latency requirements under heavy query loads, or advanced hybrid search features out of the box.